
Multiple Controllers (And making them cooperate).

This page documents the process of designing a multi-controller OpenFlow architecture where the controllers actively collaborate to emulate a network stack for experiments.

This "logging" method is based on a relatively effective method developed during a GSoC 2012 project.

Quick Links.

Overview

Logistics

Subpages - extra notes related to this page

Logs - records of work

week1

week2

week3

week4

week5

week6

week7

week8

week9

week10

week11

week12

week13

Overview.

The basic architecture for an OpenFlow network is a client - server (switch - controller) model involving a single controller and one or more switches. Networks can host multiple controllers. FlowVisor virtualizes a single network into slices so that it can be shared amongst various controllers, and the newest OpenFlow standard (v1.3.0) defines equal, master and slave controller roles so that controllers can coexist as part of a redundancy scheme in what would be a single slice. A multi-controller scheme that has not really been explored yet is one where each controller has a different function and the controllers cooperate.

Cooperation is a fairly complex task, for several reasons:

- There must be a communication scheme so that the controllers can cooperate

- A single protocol suite can be divided amongst controllers in various ways

- The information being communicated between controllers changes with protocol suite and division of tasks

Developing a general solution to this problem is difficult, so focus will be narrowed down to a more specific task of producing a cooperative multi-controller framework for running a topology configuration service split into a global and local component, and an experimental routing protocol with ID resolution.

Logistics

Work Setup

The Mininet SDN prototyping tool will be used for testing and debugging implementations. The implementation will be based on modified versions of OpenFlow Hub's Floodlight controller.

Proof-of-concept experiments for evaluating the architecture will be run on ORBIT sandboxes.

Timeline

Approximately 3.5 months (14 weeks) are allotted for design, implementation, and evaluation. The ideal split of time is the following:

- 3-5 weeks: architecture layout, topology control, context framework

- 3-4 weeks (end of: week 6-9): integrating routing/resolution

- 3-4 weeks (end of: week 9-13): evaluation

- remainder (end of: week 14): documentation

This schedule is a general outline and is used as a guideline; the actual work-flow will inevitably change.

Subpages

- A Thought Exercise: A "prologue" (ASCII text) page on thinking about multi-controller architecture design to get back up to speed after a hiatus.

- Floodlight Internals: A summary of Floodlight internals.

Logging.

(9/5):

keywords: hierarchy, collaboration.

We are set on having a weekly meeting, starting with this one.

The consensus is that a general architecture is beyond the scope of what's possible within the available timespan. The design is simplified to a narrower case:

- controllable topology (mobility as a change in topology). A global controller with a view of the network initially configures the topology. Lower (local) controllers can detect topology change and either update the global view or handle it themselves.

- controller per service and switch

- hierarchical relation between controllers, with less active protocols higher up

- information distribution mechanism between controllers, namely service location (topology). Certain controllers may add context to ports, depending on what is attached to them. The topology controller must be able to convey the location of the service to that controller. Possibly a request/reply/subscription based scheme, the subscription being useful for events (topology change).

The course of action now is to look for the following:

- Basic topology control

- Test case of mobility/handoff/flow switching (OpenRoads)

- Mechanisms that can be used to exchange service/event information

(9/7):

Added a good amount of content to Floodlight Internals.

We need to define inter-controller communication. At the basic level controllers can communicate by passing OpenFlow messages amongst themselves. This is like a not-so-transparent, upside-down version of FlowVisor.

(9/9):

The primary steps of concern seem to be the following:

- create a controller that can connect to other controllers

- pass messages between them (get one to send something to other)

- intercept and use messages (get recipient to act on message)

- make this symmetric (for request/reply/update)

Somewhere along the way, we need to devise service-type messages and service type codes that are meaningful. For now it is probably a good sanity test to get two controllers to connect to each other, akin to what FlowVisor does.

The easiest approach may be to create a module that opens up its own connection, exporting a "switch service" that handshakes with a remote controller.

(9/10):

Beginning of creating a basis for controllers that can be connected together. Tentatively calling the controllers that are going to be part of the hierarchy "units", as in, "units of control".

This involved taking OFChannelHandler and creating something similar to it, for connections from (down-links to) other controllers. At first, connections from units were represented by their own implementation, but this proved problematic if the packets from the controllers are to be handled by the modules. It therefore makes sense to represent the connections from other controllers as OFSwitchImpls.

Two new mock-OFProtocol types were created for inter-unit messaging.

- OFUnitServiceRequest: request for list of services from unit

- OFUnitServiceReply: relevant service information

The service reply will probably communicate a service type (topology, network storage, etc.) and a location to find it, such as port on a datapath where a NAS is located (if network storage). This is basically a way for the network to advertise location of services to topology-aware elements, as opposed to a server attached to a network doing the adverts for hosts.

(9/11)

Some work was done to develop a general architecture of a single controller, tentatively named a "Unit". A unit can handle both switch connections as well as controller connections. Controller connections come in two flavors:

- upstream - outgoing connections to a remote unit, used to pass a message to higher tiers

- downstream - incoming connections from a remote unit, used to return a result to the lower tiers after processing

Incoming connections are made to a "known" port. Two controllers connected to each other via both kinds of connection are adjacent.

End of week1 :Back to Logs.

(9/12)

Several points were discussed.

- Same versus different channels for switches and controllers. Same channels for both implies a need for a strictly defined protocol between controllers, and a more sophisticated upstream message handler for the channel. Different channels allows the system to be more modular, and removes the need to develop the "smart" handler that may become cumbersome to debug and develop overall.

- Vertical versus horizontal inter-controller channels. Vertical channels are connections between controllers in different layers of the hierarchy, whereas horizontal channels are between those in the same layer. Horizontal channels may contain both OpenFlow control and derived messages. Vertical messages may not conform to OpenFlow, with the exception of the vertical channel between the first level controllers and switches (the traditional OF channel).

- Nature of non-conforming messages. The vertical messages are "domain-specific", that is, they only conform to rules that are agreed upon between adjacent layers. Therefore, some translation must occur if a message is to pass across several layers. Alternatively, one message type may be interpreted differently across different tiers. An example is one layer using a VLAN tag as a normal tag, and another using it as a session ID.

- Interdomain handoffs. The network may be a mix of IP and non-IP networks, governed by controllers not necessarily able to communicate - each should be able to operate in their local scope, facilitating the translation of outgoing messages so that it may be handled properly by the IP portions of the internetwork.

- Use case. A small setup of three switches, two hosts (one moving), and two tiers of controllers. The first tier may be a simple forwarding unit, and the second dictates a higher (protocol) layer logic - to keep it simple, authentication. The logical layout between the two tiers changes behaviors of the tiers:

- Tier two connects to just one tier one controller: the connection point must communicate the higher-tier's commands to others in its tier.

- Tier two connects to all tier one controllers: tier two in this case is a global 'overseer' that can actively coordinate, in this case, a handoff where permitted.

(9/13)

A second channel handler was added for inter-controller communication. This involves an addition of:

- a slightly modified ChannelPipelineFactory for the new channel pipeline (the logical stack of handlers that a message received on the channel will be processed by)

- a new ChannelHandler for the socket, UnitChannelHandler, added to the controller class

- a new server-side channel bootstrapping object for this port (6644, "controllerPort"), added to the controller class

This page was used as a reference.

End of week2 :Back to Logs.

(9/20)

A topology file/parser were added. The file is a .json list of upstream and peer controller units (nickname:host:port triplet). This list is used to instantiate client-side connections to the neighbors.

The essential difference (as of now) between an upstream and a peer unit is whether the client connection goes one way or both. Several ways to identify the origin of a message come to mind:

- keep the original lists of neighbors, and find relation by lookup per message

- identify based on which message handler object receives message. The object:

- is identified as down, up, or peer (relation)

- manages outgoing connections to upstream or peer

- raw classification:

- if received on 6644 and no client connection to originator exists, it is from downstream

- if received on 6644 and a client connection to originator exists, it is from a peer

- if received on a high-number port and originator does not connect to 6644, it is from upstream
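The three "raw classification" rules above can be sketched as a small decision function. This is only an illustration: the class, enum, and parameter names are hypothetical and not part of the codebase, and the last branch (a message arriving on one of our own client sockets from a unit that also connects to port 6644) is assumed to indicate a peer, which the rules above do not state explicitly.

```java
/** Sketch of the "raw classification" rules; all names here are hypothetical. */
final class UnitRelationSketch {
    /** Well-known port on which a unit accepts connections from other units. */
    static final int UNIT_PORT = 6644;

    enum Relation { DOWNSTREAM, PEER, UPSTREAM }

    /**
     * @param localPort              local port the message was received on
     * @param weConnectToOriginator  true if this unit holds a client connection to the originator
     * @param originatorConnectsToUs true if the originator holds a connection to our UNIT_PORT
     */
    static Relation classify(int localPort, boolean weConnectToOriginator,
                             boolean originatorConnectsToUs) {
        if (localPort == UNIT_PORT) {
            // Arrived on the server socket: a peer if we also dial out to it.
            return weConnectToOriginator ? Relation.PEER : Relation.DOWNSTREAM;
        }
        // Arrived on one of our own client sockets (high-numbered local port).
        // Assumption: if the originator also dials in to us, it is a peer.
        return originatorConnectsToUs ? Relation.PEER : Relation.UPSTREAM;
    }

    public static void main(String[] args) {
        System.out.println(classify(UNIT_PORT, false, true));
        System.out.println(classify(UNIT_PORT, true, true));
        System.out.println(classify(51234, true, false));
    }
}
```

Classifying per message this way needs no stored relation, at the cost of two connection lookups for every message received.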

(9/21)

Realizing that the lack of understanding of the Netty libraries was becoming a severe hindrance, we inspect a few documents to get up-to-speed:

- Netty Channel handlers: http://www.znetdevelopment.com/blogs/2009/04/21/netty-using-handlers/

- Official Netty getting-started docs: http://docs.jboss.org/netty/3.1/guide/html/start.html

With the new resources at hand, we re-document the modifications done to the main Floodlight event handler (Controller.java) in order to intercept and respond to messages from both switches and controller units.

- The "server-side" channels. A control unit expects two types of incoming connections, 1) from switches, and 2) from other units (peer and downstream). These two are identified by default TCP port values of 6633 and 6644, respectively. The switch channel is handled using OFChannelHandler, which implements the classic OpenFlow handshake. The unit channel, which we add as the UnitChannelHandler to our Controller.java derivative class (UnitController.java), deals with the inter-unit handshake, which uses a modified version of OpenFlow. Both are initialized and added to the same ChannelGroup when the UnitController run() method is called.

- client-side channels. A controller unit with upstream or peer units will connect to other units as clients. Each client connection is handled by a UnitConnector, which attempts to connect to each remote socket specified in a topology file located, by default, under resources/ . Each entry describing a unit has the following form:

{

"name":"unit_name",

"host":"localhost",

"port": 6644

}

This can of course be easily changed, along with its parser (UnitConfigUtil.java) and the structure holding the information for each entry (UnitPair.java). The file specifies peer and upstream units in separate lists, in order to facilitate the differentiation between the two kinds of server units. We define a unit that accepts connections to be a server unit, and the ones connecting to it, client units. A separate channel handler is supplied for these client-side connections.

However, it appears that all three channels use similar-enough channel pipelines, whose only differences lie in which handler is being used; therefore future work includes consolidating the three PipelineFactories.
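As an illustration of the per-entry structure described above, the following is a minimal sketch of a holder for one name/host/port entry. It is not the actual UnitPair.java, whose contents are not shown on this page; the class name, the `parse` helper for a "name:host:port" triplet string, and the port validation are all assumptions made for the example.

```java
import java.net.InetSocketAddress;

/** Hypothetical stand-in for a topology entry; not the real UnitPair.java. */
final class UnitPairSketch {
    final String name;
    final String host;
    final int port;

    UnitPairSketch(String name, String host, int port) {
        if (port < 1 || port > 65535) {
            throw new IllegalArgumentException("bad port: " + port);
        }
        this.name = name;
        this.host = host;
        this.port = port;
    }

    /** Parses a "name:host:port" triplet (assumed format; IPv6 literals are not handled). */
    static UnitPairSketch parse(String triplet) {
        String[] parts = triplet.split(":");
        if (parts.length != 3) {
            throw new IllegalArgumentException("expected name:host:port, got: " + triplet);
        }
        return new UnitPairSketch(parts[0], parts[1], Integer.parseInt(parts[2]));
    }

    /** Address a client-side connector would dial; resolution is left to connect time. */
    InetSocketAddress toSocketAddress() {
        return InetSocketAddress.createUnresolved(host, port);
    }

    public static void main(String[] args) {
        UnitPairSketch unit = parse("unit_name:localhost:6644");
        System.out.println(unit.name + " -> " + unit.toSocketAddress());
    }
}
```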

End of week3 :Back to Logs.

(9/26)

Things seem to be headed towards architectural experimentation. Three things to investigate:

- Radio Resource Management (RRM) : incorporate advertisements between controllers somehow

- Authentication : An entity that allows/denies a host to tx/rx on a network. This, combined with forwarding elements, provides a foundation for collaborative multi-controller networks.

- Discovery : Controllers discover, and establish links with, other controllers within the control plane without explicit configuration, as with DHCP.

The first two are test cases to add credibility to an architecture like this one. In any case, the syntax for service/capabilities adverts must be defined.

The to-dos for now:

- look up RRM.

- investigate process of host authentication.

- find examples of existing distributed controllers.

(9/29)

A quick survey for distributed controllers brought up several examples:

- Onix: A Distributed SDN control platform, which synchronizes controllers with a Network Information Base (NIB), which stores network state. Relies on a DHT + local storage. Oriented towards reliability, scalability, stability, and generalization.

- HyperFlow: A NOX application that allows multiple controllers to coordinate by subscribing to each other and distributing events that change controller state. Relies on WheelFS, a FUSE-based distributed filesystem.

- Helios: A distributed controller by NEC. Cannot find a white-paper for this one.

HyperFlow is what this (attempted) controller seems to resemble the most. However, the point to make is that this controller should not be just another implementation of one of these controllers, or of their characteristics. Existing distributed controllers seem to assume a model in which multiple controllers all run the same application, so that the one application can scale. This contrasts with distributing functions across multiple controllers, e.g. having each controller run a different application to "vertically" distribute the load (vertical meaning the splitting of a network stack into several pieces, as opposed to the duplication of the same stack across multiple controllers).

End of week4 :Back to Logs.

(10/4)

Seeing that the client-side channel implementation was not working as intended, a revision that can hopefully be worked back into the source was made.

As for the documentation aspect, several use cases for a multi-controller architecture that distributes tasks based on application (protocol) need to be devised. Some points made during discussion:

- Many networks contain various services that belong in separate "layers", which are maintained and configured by different groups, and may even reside on different devices.

- Consider the analogy of a microkernel, where the various aspects of the kernel (w/r/t a monolithic architecture) are separated into processes that pass messages amongst themselves: likewise, various controllers may serve different protocols that make up a network stack. This is not a comment on the performance of the microkernel architecture, but rather on its existence.

In terms of multi-controller networks, there are other cases that should be identified:

- OpenFlow 1.3 Roles: these are redundancy entities, with protocol-standardized messages to facilitate handoffs of switch control between controllers. The setup involves multiple connections originating from a single switch. The switch also plays a part in managing controller roles (e.g. it must support this bit of the protocol). This is intended to provide a standardized interface, so it is not an implementation; controllers must implement what these roles imply.

- Slices: these are designed to keep controllers that conflict with each other in separate pools of network resource, allowing each to exist as if they are the only controllers in the network. This effect is achieved thanks to a proxy, not collaboration between the controllers.

(10/5)

A test implementation of the switch-side handshake was added to the codebase, in a separate package net.floodlightcontroller.core.misc. This is a client-side ChannelHandler/PipelineFactory that generates switch-side handshake reply messages and ECHO_REPLIES to keepalive pings once it completes the handshake. This will be used as a base for the controller's client components.

Noting initialization, the handler's channel is set manually during client startup:

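/* In client class */

// Pipeline factory that installs the client-side (switch-like) handler
OFClientPipelineFactory cfact = new OFClientPipelineFactory(null);

final ClientBootstrap cb = createClientBootStrap();

cb.setPipelineFactory(cfact);

InetSocketAddress csa = new InetSocketAddress(this.remote, this.port);

// connect() is asynchronous; the future completes when the attempt finishes
ChannelFuture future = cb.connect(csa);

// The Channel object is available immediately, before the connection is up
Channel channel = future.getChannel();

// Hand the channel to the handler manually
cfact.setHandlerChannel(channel);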

This can probably be avoided if the handler is implemented as an inner class to the client class that fires up the channel, as in Controller.java.

(10/8)

With the base foundations mostly built, it seems to be a good time to document some of the components.

Component classes:

- ControlModuleProvider : the module that provides the controller class, based on FloodlightProvider.java

- ControlModule : the main controller class, subclass of Controller.java. Handles incoming connections from control plane and regular OpenFlow channels.

- ControlClient : the control plane client side event handler, one instantiated per outgoing connection.

- UnitConfUtil : Memoryless storage for topology and various other controller-related configurations.

- OFControlImpl : representation class for an incoming/outgoing connection to another controller, subclass to OFSwitchImpl.

Configuration files

- topology.json : stores topology information, e.g. neighbor controller information.

- cmod.properties : stores Floodlight module configurations for all loaded and active service-providing modules.

(10/11)

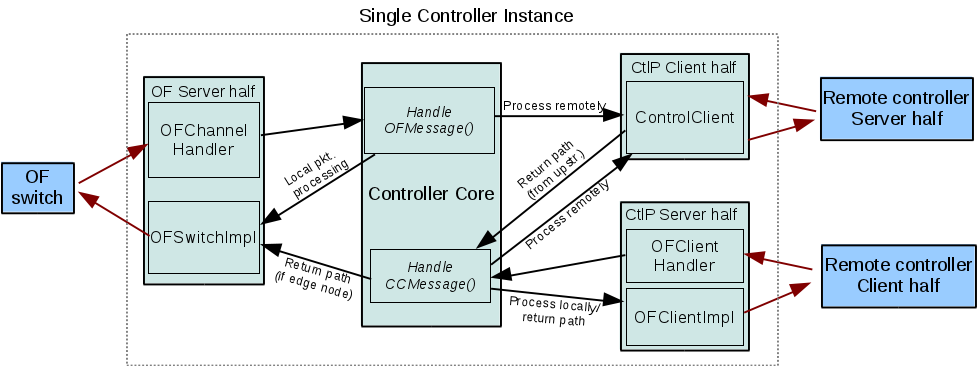

Some time was spent getting a better sense of the software architecture. The most recent organization looks like below:

This image attempts to summarize the required communication channels and message processing chain:

- Channels - OpenFlow (between switches and a controller), client/server connections between controllers on the control plane

- Message processing - local data processing, escalation/forwarding, and return path

In a sense, the main controller class is an implementation of a rudimentary kernel.

Some more discussion on use cases took place, in the context of different management domains with multiple controllers owned by separate groups but residing on the same network. In the traditional network setup, the groups would have to actively collaborate in order to prevent their controllers from trampling each other's policies. For example, a central controller orchestrator, when allowing a user to administrate the network, would only permit the user to configure their own controller. The orchestrator would have to "know" which users can access which controller, and how that controller may influence the network.

End of week5 :Back to Logs.

(10/16)

The process chain was fleshed out further.

- remote connections appear like modules that subscribe to the events that they need for their service. For example, the authenticator would listen for any new device connections and departures, and not let packet_ins from the device pass further down the OFMessage chain if the host is not allowed (or inject drop messages for the denied host).

- since modules cannot be dynamically added, and we don't necessarily know how many connections will come in, the modules that relay events to other controllers will also accept arbitrary numbers of connections, and keep track of the mapping between messages and channels.

(10/19)

- Added a parser for controller service descriptions/requirements to the topology file. The configs for the controller are parsed, then pushed out as part of the service info exchange that controllers do with each other when establishing a connection. The aim is to be able to describe services in terms of the resources that they need. The resources are in the form of events that the controller is capable of detecting and dispatching (messages, switches, and hosts/devices).

(10/20)

Preliminary decision to use Vendor extensions of OpenFlow specs for inter-controller service messaging, at least for controllers to propagate information about the services that they export, and what event information they require to properly run the service.

The base of a vendor message includes an integer to specify the data type, which allows one to use one "vendor ID" with many message types.
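To make the layout concrete, here is a minimal sketch of how such a message could be packed and inspected with plain `java.nio`, assuming the OpenFlow 1.0 wire format: an 8-byte header (version, type, length, xid), a 4-byte vendor ID, and then the vendor data, which here begins with the 4-byte data type integer. The class name, the vendor ID value, and the helper methods are hypothetical and are not Floodlight or OpenFlowJ APIs.

```java
import java.nio.ByteBuffer;

/** Hypothetical byte-level sketch of an OpenFlow 1.0 vendor message. */
final class VendorMessageSketch {
    static final byte OFP_VERSION_1_0 = 0x01;
    static final byte OFPT_VENDOR = 4;             // message type code in OpenFlow 1.0
    static final int BASE_LEN = 8 + 4 + 4;         // header + vendor ID + data type
    static final int EXAMPLE_VENDOR_ID = 0x00C0FFEE; // made-up ID for illustration only

    static byte[] encode(int xid, int vendorId, int dataType, byte[] payload) {
        int length = BASE_LEN + payload.length;
        if (length > 0xFFFF) {
            throw new IllegalArgumentException("message too long: " + length);
        }
        // ByteBuffer is big-endian by default, which matches network byte order.
        ByteBuffer buf = ByteBuffer.allocate(length);
        buf.put(OFP_VERSION_1_0).put(OFPT_VENDOR).putShort((short) length).putInt(xid);
        buf.putInt(vendorId).putInt(dataType).put(payload);
        return buf.array();
    }

    static int vendorIdOf(byte[] message) {
        return ByteBuffer.wrap(message).getInt(8);
    }

    /** Reads the data type, which selects one of many message kinds under one vendor ID. */
    static int dataTypeOf(byte[] message) {
        ByteBuffer buf = ByteBuffer.wrap(message);
        if (message.length < BASE_LEN || buf.get(1) != OFPT_VENDOR) {
            throw new IllegalArgumentException("not a vendor message with a data type");
        }
        return buf.getInt(12);
    }

    public static void main(String[] args) {
        byte[] msg = encode(7, EXAMPLE_VENDOR_ID, 2, new byte[] {1, 2, 3});
        System.out.println("length=" + msg.length + " dataType=" + dataTypeOf(msg));
    }
}
```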

End of week6 :Back to Logs.

(10/24)

Some terms to keep things organized:

- Control plane : the collection of communication channels visible only to the controllers. Assumed to be isolated from the data plane, in which the switches and network hosts reside.

- network event : a message that notifies a controller of network state change, e.g. a switch joining the network, or a new OpenFlow message.

- Control entity : a SDN controller. May or may not be connected to switches but nevertheless capable of influencing network state by processing OpenFlow messages or its derivatives.

- Control plane server : a control entity that exports services to other controllers, and may subscribe to other controllers for network events. Also called "subscribers".

- Control plane client : a control entity that relays network events to control entities interested in them.

A server is configured with the information required to advertise its services and subscriptions. A single controller can also be both client and server.

Three unique control messages were defined using OF Vendor messages:

- OFExportsRequest : sent by a control plane client when initially connecting to a control plane server. Akin to an OFFeatureRequest.

- OFExportsReply : sent in response to an OFExportsRequest. Contains the names of the services exported by the server, and the events that it would like to subscribe to.

- OFExportsUpdate : sent by a server to its clients, when it receives (as a client) any new OFExportsReply messages from its servers.

The Reply and Update messages convey subscriptions as one of three categories:

- Device : network host related events such as a host join, departure, or address/VLAN change.

- Switch : OpenFlow switch joins and leaves.

- Message : OpenFlow messages distinguished by message type.

This reflects the event types that Floodlight recognizes by default; the set is well-defined, and is probably comprehensive enough to convey a very wide range of network events. The aim here is to define subscriptions as a combination of events from these event categories.

To keep things simple, of the Message category of events, focus will be placed on the Packet_In type (type code 10, i.e. 0x0a), which is practically a catch-all for network host related messages. If more fine-tuned filtering is required, an OFMatch structure can probably be used to describe the constraints on the types of Packet_Ins a subscriber may wish to hear about.
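A subscription expressed as a combination of events from the three categories could be modeled roughly as follows. This is a sketch under assumptions: the class name, the use of integer event codes, and the `DEVICE_JOIN` code are invented for illustration; only the Packet_In type code (10 in the OpenFlow specification) is taken from the protocol.

```java
import java.util.EnumMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/** Hypothetical model of a subscriber's wanted events, grouped by category. */
final class SubscriptionSketch {
    enum Category { DEVICE, SWITCH, MESSAGE }

    static final int OFPT_PACKET_IN = 10; // OpenFlow message type code for Packet_In
    static final int DEVICE_JOIN = 0;     // made-up code for a host-join event

    private final Map<Category, Set<Integer>> wanted =
            new EnumMap<Category, Set<Integer>>(Category.class);

    /** Adds one event of the given category; returns this to allow chaining. */
    SubscriptionSketch subscribe(Category category, int eventCode) {
        Set<Integer> codes = wanted.get(category);
        if (codes == null) {
            codes = new HashSet<Integer>();
            wanted.put(category, codes);
        }
        codes.add(eventCode);
        return this;
    }

    /** True if the subscriber asked to hear about this event. */
    boolean wants(Category category, int eventCode) {
        Set<Integer> codes = wanted.get(category);
        return codes != null && codes.contains(eventCode);
    }

    public static void main(String[] args) {
        SubscriptionSketch authenticator = new SubscriptionSketch()
                .subscribe(Category.MESSAGE, OFPT_PACKET_IN)
                .subscribe(Category.DEVICE, DEVICE_JOIN);
        System.out.println(authenticator.wants(Category.MESSAGE, OFPT_PACKET_IN));
    }
}
```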

End of week7 :Back to Logs.

(11/2)

Concept work for the module front end to remote connections:

1. Three types of message processing chain behavior, according to the application type at the remote end:

- Proxy : messages cannot be passed down chain until the remote module has processed things, and returned a decision. This includes firewalls and authentication.

- Concurrent : messages being processed by the remote module can also be processed by the others down the chain even while it is being remotely processed.

- Both : some modules may be required to wait for the results of the remote module, while others may work concurrently.

In terms of communicating the specific type, there can be a field that specifies preferred processing options in the ExportsReply messages.
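The difference between the Proxy and Concurrent behaviors can be sketched with a simplified, synchronous dispatcher. Everything here is hypothetical (the interfaces, the `Mode` enum, and the use of plain strings as events), and the remote decision is modeled as a local call; a real implementation would send the event over the control plane and, for Proxy, hold the chain until the reply arrives. The "Both" case, where only some modules wait, is not modeled.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch of Proxy versus Concurrent chain behavior. */
final class ProcessingChainSketch {
    enum Mode { PROXY, CONCURRENT }

    /** Stand-in for a remote controller's decision on an event. */
    interface RemoteModule { boolean allow(String event); }

    /** Stand-in for a local module further down the chain. */
    interface LocalModule { void handle(String event); }

    /** Returns true if the event was passed on to the local modules. */
    static boolean dispatch(String event, Mode mode, RemoteModule remote,
                            List<LocalModule> chain) {
        if (mode == Mode.PROXY) {
            // Proxy: nothing moves down the chain until the remote side decides.
            if (!remote.allow(event)) {
                return false;
            }
        } else {
            // Concurrent: the remote side is notified, but its result does not gate the chain.
            remote.allow(event);
        }
        for (LocalModule module : chain) {
            module.handle(event);
        }
        return true;
    }

    public static void main(String[] args) {
        final List<String> seen = new ArrayList<String>();
        List<LocalModule> chain = new ArrayList<LocalModule>();
        chain.add(new LocalModule() {
            public void handle(String event) { seen.add(event); }
        });
        RemoteModule denyAll = new RemoteModule() {
            public boolean allow(String event) { return false; }
        };
        System.out.println(dispatch("packet_in", Mode.PROXY, denyAll, chain));
        System.out.println(dispatch("packet_in", Mode.CONCURRENT, denyAll, chain));
    }
}
```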

2. Implementation-wise, static modules make dynamic connection additions difficult. In dynamic controllers, a module per connection may be added as connections are introduced, so tackling this problem is not considered a topic of interest. (However, it has to be done.)

3. Flow entry merging is a nontrivial topic that is not covered here because of its breadth. Once a flow entry is added, it is assumed to:

- aside from removal, never be modified

- not conflict with any others.

(11/3)

Process chain alterations based on requests should be done on a per-event-category basis. The rationale comes from a few points: Floodlight processes events categorically, in separate modules, and not every event that one controller subscribes to should necessarily be treated in the same manner. An example of this is the authenticator, which subscribes to packet_ins and new_device events; while packet_ins from a blacklisted host must not be processed by any other module capable of causing denied host traffic to be forwarded, new_device messages can be passed to other modules with little risk.

Several concerns are still up in the air, some of which may alter how sensible the above statement is:

- stub module(s) - one for each event category versus one for all categories. Former makes each simpler, but probably requires more context passing, while the latter makes shared data easier to manage but the module itself more complicated e.g. makes a second level scheduler necessary.

- multiple remote controllers with conflicting requests for the same event. This strongly implies that diversion should /not/ drop an event after it passes through one.

For starters, the most basic structure would be a diverting module (which works on one at a time, the default dispatcher behavior).

End of week8 :Back to Logs.

(11/8)

The current state of the implementation is as follows:

- central configuration file for the advertisements sent by the controller

- single remote dispatch module that registers as a listener for devices, switches, and messages

- Vendor message-based control plane communication

Vendor message contents may be classified into initialization messages and service messages. The former are messages exchanged during an initial handshake between controllers, and the latter are messages used during normal operation e.g. for event messaging and returning processed results. In the interest of time, the controller-specific event contexts will probably be used for this purpose, as it can be used to directly manipulate the behavior of various modules. In the ideal case, a more general messaging format should work.

(11/15)

More work on integrating the connection registration system for the remote dispatcher module:

- The dispatch queue holds all registered connections, sorted by event category, and then events. This allows for lookup using the event category fired by the controller, so we don't need to do extra work to begin pulling up a list of potential event recipients.

- The dispatch queue is manipulated by the remote dispatch module, but is globally accessible through the controller class.

- The client-side connections may not always connect to the remote controller in one shot - therefore we add a crude wait-and-retry mechanism to the client handler.

- The client connections are dispatched from the remote dispatch module. This prevents some timing issues by guaranteeing that the dispatch module has started up before clients attempt to register with it.
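The two-level organization of the dispatch queue (event category first, then event) can be sketched as a nested map. The names below are hypothetical, and connections are represented by plain string IDs rather than channel objects, so this only illustrates the lookup structure, not the actual module.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.EnumMap;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical sketch of a registry keyed by event category, then by event. */
final class DispatchQueueSketch {
    enum Category { DEVICE, SWITCH, MESSAGE }

    private final Map<Category, Map<String, List<String>>> table =
            new EnumMap<Category, Map<String, List<String>>>(Category.class);

    /** Registers a connection as a recipient of one event in one category. */
    void register(Category category, String event, String connectionId) {
        Map<String, List<String>> byEvent = table.get(category);
        if (byEvent == null) {
            byEvent = new HashMap<String, List<String>>();
            table.put(category, byEvent);
        }
        List<String> connections = byEvent.get(event);
        if (connections == null) {
            connections = new ArrayList<String>();
            byEvent.put(event, connections);
        }
        connections.add(connectionId);
    }

    /** Looks up recipients using the category fired by the controller, then the event. */
    List<String> recipients(Category category, String event) {
        Map<String, List<String>> byEvent = table.get(category);
        if (byEvent == null || !byEvent.containsKey(event)) {
            return Collections.emptyList();
        }
        return Collections.unmodifiableList(byEvent.get(event));
    }

    public static void main(String[] args) {
        DispatchQueueSketch queue = new DispatchQueueSketch();
        queue.register(Category.MESSAGE, "PACKET_IN", "authenticator");
        System.out.println(queue.recipients(Category.MESSAGE, "PACKET_IN"));
    }
}
```

Keying on category first means the controller's existing per-category listener callbacks can index straight into the table without scanning unrelated registrations.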

End of week9 :Back to Logs.

(11/16)

The framework is probably developed to the point where it is good to test multiple instances against each other. There are several ways to do this, but, when working on a single machine, we need to have each instance read in a separate configuration file. A preliminary test setup involves a directory of configuration files, and a script to launch controller instances using the appropriate configuration files and stop them as necessary.

Discussions regarding benchmarks: A series of basic benchmarks are to be done. One of the most fundamental would be the overhead required for a flow insertion. Comparison with other platforms would come later, once this fundamental bottleneck is understood.

Future work: It is good to speculate on what can be made of this platform, assuming that it proves feasible. One improvement to consider would be discovery-based startups, where the controllers do not need to be told who their neighbors are. This implies that advertisements are used to announce capabilities, and clients decide which services they require and choose based on the advertisements they hear on the wire. Connections can switch to a dedicated stream once controllers choose their services.
