[[TOC(Other/Summer/2023/RobotTestbed/*, depth=1, heading=Robotic IoT Smartspace Testbed)]]

= Robotic IoT !SmartSpace Testbed =

**WINLAB Summer Internship 2023**

**Group Members:** Katrina Celario^UG^, Matthew Grimalovsky^UG^, Jeremy Hui^UG^, Julia Rodriguez^UG^, Michael Cai^HS^, Laura Liu^HS^, Jose Rubio^HS^, Sonya Yuan Sun^GR^, Hedaya Walter^GR^

== Project Overview ==

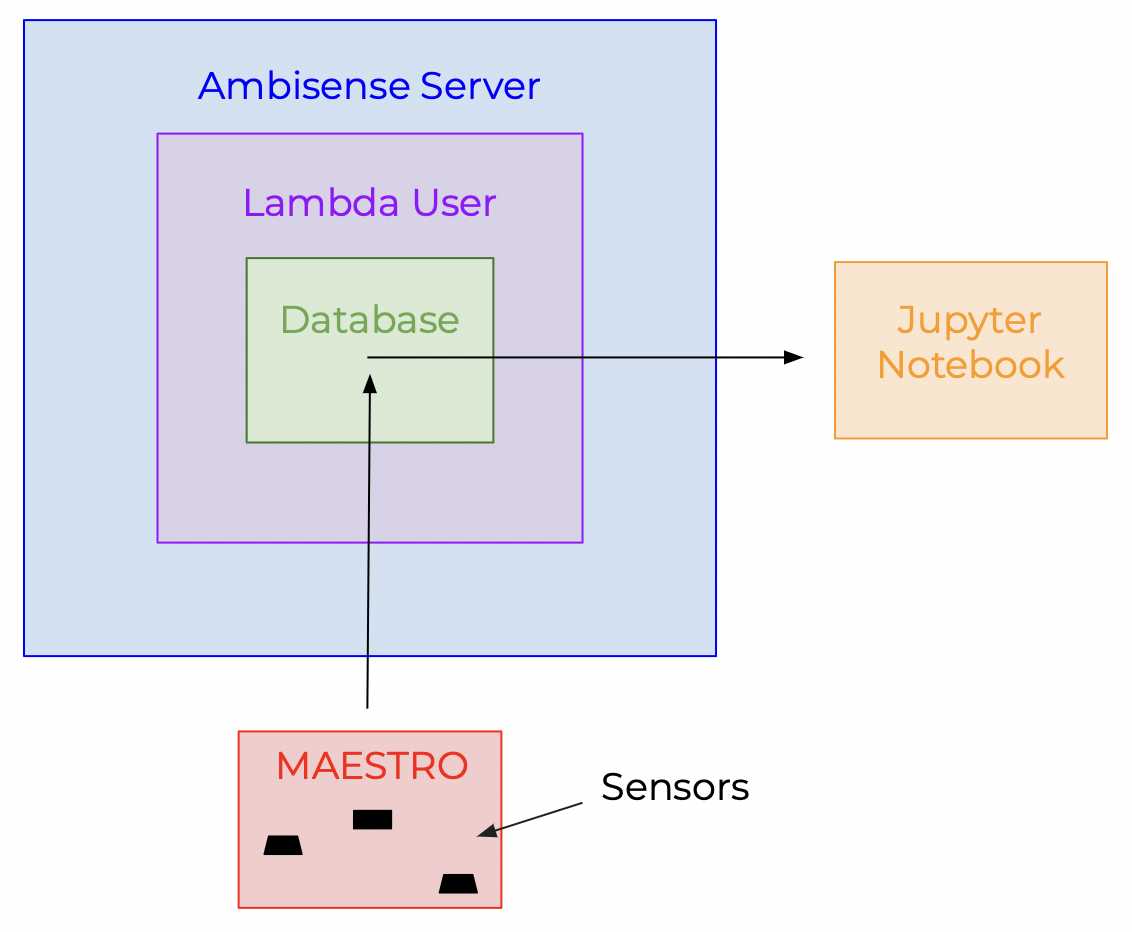

This project explores the Internet of Things (IoT) and its transformative potential when combined with Machine Learning (ML). To do so, the group continues the work on the '''''!SenseScape Testbed''''', an IoT experimentation platform for indoor environments containing a variety of sensors, location-tracking nodes, and robots. The testbed enables IoT applications such as human activity recognition, speech recognition, and indoor mobility tracking. In addition, the project advocates for energy efficiency, occupant comfort, and context representation. The ''!SenseScape Testbed'' provides an adaptable environment for labeling and testing advanced ML algorithms centered around IoT.

=== Hardware ===

This project is centered on a specific piece of hardware referred to as a '''''MAESTRO''''', a custom multi-modal sensor designed by the previous group. The MAESTRO is capable of perceiving different types of data from its environment (listed below) and is connected to a Raspberry Pi, a microcomputer with the Raspberry Pi OS Lite (Legacy) installed. In addition, the group is leaning towards using a Raspberry Pi Camera Module for the camera.

[[Image(Maestro.jpg, 250px)]]

[[Image(Raspberry Pi 3 B+.jpeg, 250px)]]

[[Image(Raspberry_Pi_Camera_Module_3_2.jpeg, 250px)]]

The attachments on the MAESTRO include:

→'''ADXL345:''' measures acceleration experienced by the sensor

→'''BME680:''' measures temperature, humidity, pressure, and gas resistance

→'''TCS3472:''' measures and converts color and light intensity into digital values

→'''MLX90393:''' measures the magnetic field along the x, y, and z axes

→'''ZRE200GE:''' detects human presence or motion by sensing infrared radiation emitted by warm bodies

→'''MAX9814:''' amplifies audio signals captured by the connected microphone

→'''NCS36000:''' controller for the passive infrared (PIR) sensor

→'''MCP3008:''' converts analog signals from the sensors into digital values that a computer can read

→'''LMV3xx:''' operational amplifier that allows the full voltage range of the power supply to be utilized

== Project Goals ==

'''Previous Group's Work''': **https://dl.acm.org/doi/abs/10.1145/3583120.3589838**

Based on the future research section of the previous group's paper, there are two main goals the group wants to accomplish.

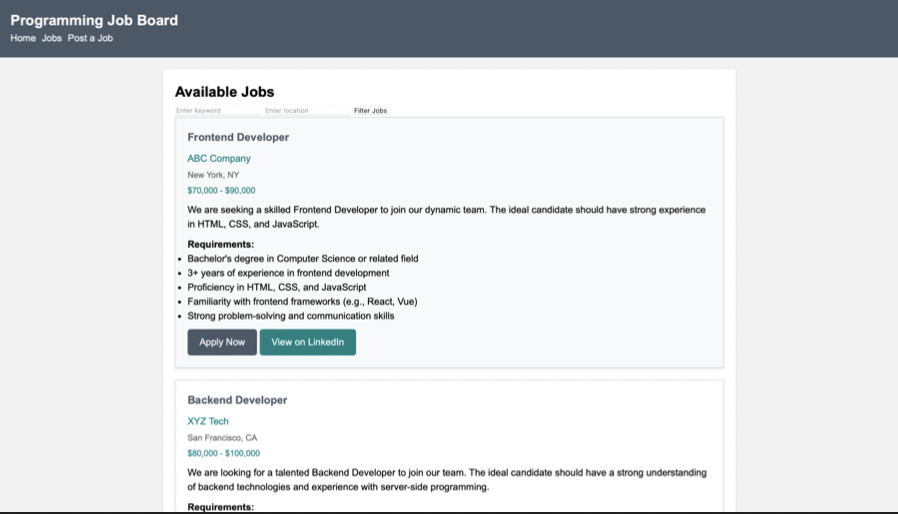

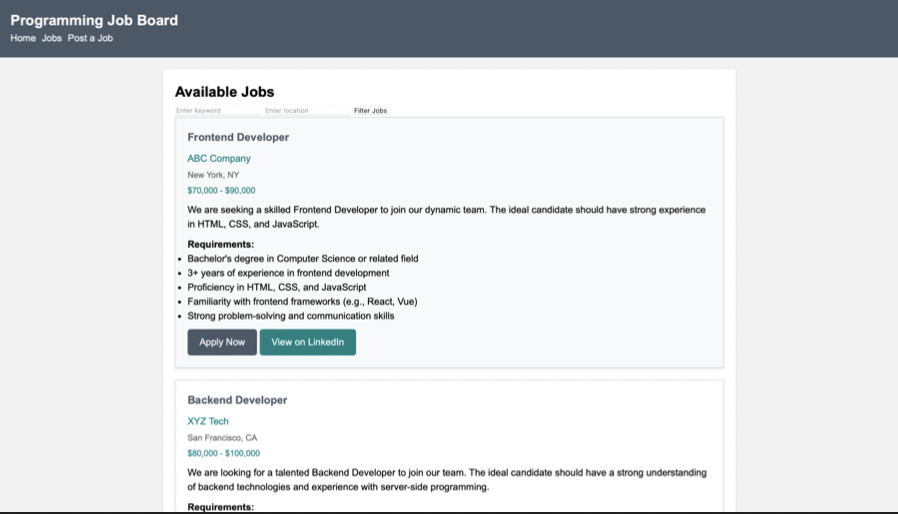

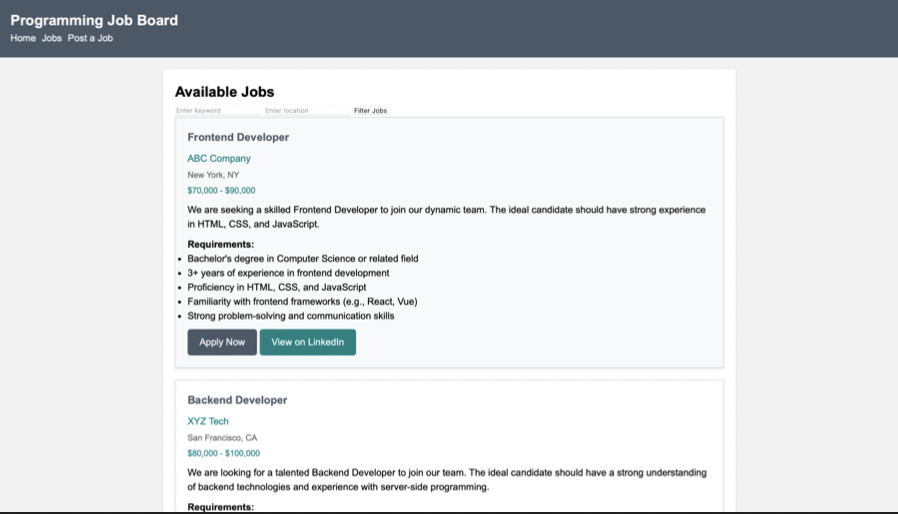

The first goal is to create a website that includes both real-time information on the sensors and a reservation system for remote access to the robot. For each sensor, the website should display its name, whether it is online, the most recent time it was seen gathering data, and the continuous data streaming in. For the remote reservation/experimentation features, the website must be user-friendly: it should be easy for users to execute commands, while restricting them from changing things they should not have access to. The group aims to allow remote access to the !LoCoBot through ROS (Robot Operating System) and SSH (Secure Shell), as long as all machines involved are connected to the same network (VPN).
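As a rough illustration of the per-sensor information the website is meant to show, the sketch below models one status entry in Python. The class name, the field names, and the 30-second "online" window are assumptions made for this example; the source does not specify how online status is decided.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical cutoff: a sensor counts as online if it reported within this window.
ONLINE_WINDOW = timedelta(seconds=30)


@dataclass
class SensorStatus:
    """One entry of the status display for a single MAESTRO."""
    name: str
    last_seen: Optional[datetime]  # time of the most recent reading, None if never seen

    def is_online(self, now: datetime) -> bool:
        # A sensor that has never reported is treated as offline.
        if self.last_seen is None:
            return False
        return now - self.last_seen <= ONLINE_WINDOW

    def summary(self, now: datetime) -> str:
        state = "online" if self.is_online(now) else "offline"
        seen = self.last_seen.isoformat() if self.last_seen else "never"
        return f"{self.name}: {state} (last seen {seen})"
```

Deriving the online flag from the last-seen timestamp, rather than storing it separately, keeps the two displayed values from disagreeing.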

The second goal is to automate the labeling of activity within the environment using natural language descriptions of video data. The video auto-labeling can be done by training neural networks (e.g., a CNN and an LSTM) in an encoder-decoder architecture, covering both feature extraction and the language model. For activities that cannot be classified with sufficient certainty, the auto-labeling tool could save the timestamp and/or video clip and notify the user that it requires manual labeling. Once the network is fully trained, it would simply choose the label with the highest probability and, where appropriate, mark that data as “uncertain”. The overall aim is to connect this video data to the sensor data in the hope of bridging the gap between sensor data and text.
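The "highest probability, possibly marked uncertain" rule can be sketched in a few lines of Python. This is only an illustration: the function name and the 0.6 threshold are assumptions, and the probabilities would come from whatever model the group eventually trains.

```python
from typing import Dict, Tuple

# Hypothetical cutoff below which a clip is flagged for manual labeling.
CONFIDENCE_THRESHOLD = 0.6


def choose_label(probabilities: Dict[str, float],
                 threshold: float = CONFIDENCE_THRESHOLD) -> Tuple[str, bool]:
    """Return (label, needs_review) for one clip.

    `probabilities` maps each candidate activity label to the model's
    probability for it. The most probable label is always returned;
    needs_review is True when that probability is below the threshold.
    """
    if not probabilities:
        raise ValueError("no candidate labels")
    label = max(probabilities, key=probabilities.get)
    return label, probabilities[label] < threshold
```

A clip returned with `needs_review` set is one whose timestamp or video would be saved for manual labeling.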

=== The Project's Three Phases ===

The progression of this project relies on three milestones, each with unique and specific goals. Moving forward, each phase is more advanced than the last.

'''Phase One:'''

For the first phase, the group wants the MAESTROs to recognize a predetermined set of activities in an office environment, in this case WINLAB. The plan is to arrange the MAESTROs in a grid-like coordinate system, taking into account both the location of outlets and the "predetermined activities" that will be conducted. In addition to the MAESTROs, there will be multiple cameras in place capturing continuous video data of human activity. This video data will be used for the automatic labeling. Phase one is the foundation for the rest of the milestones moving forward.

'''Phase Two:'''

For the second phase, the group is looking for the MAESTROs to communicate with each other about what is happening in their immediate space using '''zero-shot''' or '''few-shot''' recognition.

''zero-shot:'' ability of a large language model to perform a task or generate responses for which it has not been explicitly trained.

''few-shot:'' ability of a large language model to recognize or classify new objects or categories with only a few labeled examples, or shots.

'''Phase Three:'''

For the third and final phase, the group wants the MAESTROs to communicate with each other to create a narrative of the activity in the given space. The model could be queried about the "memory" of the space and would give descriptions that vary with the desired scope of the answer (1 hour vs 1 year). As this phase shows, the large language model is the core of the project; however, the MAESTROs must be deployed first.

== Progress Overview ==

=== WEEK ONE ===

**[https://docs.google.com/presentation/d/1IYKcQdG27xSXlZqqyma7TG-OmXAVHhU-KHgzNXne9zE/edit?usp=sharing Week 1 Presentation]**

The main priority for Week One was getting familiar with the topics the project focuses on. Since all the group members had little to no knowledge of these topics, a variety of relevant research papers were passed around. These papers covered topics such as the encoder-decoder architecture the large language model would be built upon, and included the research paper the previous group wrote regarding ''!SenseScape''.

In addition to reading, the group was introduced to the robot, which had the potential to be used as a mobile sensor in the indoor testing environment. For a few days that week, the group met at the Computing Research & Education Building (CoRE), located on the Rutgers Busch Campus, in order to see and interact with the robot. To learn more about the way the robot worked, the group set up ROS (Robot Operating System) on an Ubuntu distribution and even ran elementary Python scripts on the robot following a talker/listener architecture.

=== WEEK TWO ===

**[https://docs.google.com/presentation/d/1QW5UYQB5ktRmmwNGVY43LUMOT2xoVWsmm7XsQQUSX9M/edit?usp=sharing Week 2 Presentation]**

For Week Two, the group started the website design process by first brushing up on HTML (!HyperText Markup Language), a markup language a few of the members had previous experience with. This included watching tutorials, practicing on Codecademy, and even creating a simple website.


In addition, the group took the time to learn about the Raspberry Pi and how it works. Starting with a blank SD card, a few members installed the operating system (Raspberry Pi OS Lite - Legacy), connected the Pi to a VPN, and downloaded the packages needed to run the data-collecting Python scripts (written by the previous group), along with packages that might be useful in the future. Doing so allowed the group to start understanding the previous work.

=== WEEK THREE ===

**[https://docs.google.com/presentation/d/1DBRkGEgmIhbs65g9_E-yGuri4jHLU6_4XQ8te07zc_M/edit?usp=sharing Week 3 Presentation]**

For Week Three, the group split up and worked on a variety of aspects of the project.

One task was meeting with a previous graduate student to understand the existing scripts and how each Pi was connected to the professor's server. It turned out that the previous group used '''balenaEtcher''', software used to "clone" the data of the "working Pi" (Python scripts, packages, and ROS) onto blank SD cards for the other Pis. With this knowledge, the group cloned the SD cards for the remaining 25 Pis. Each Pi was then checked to see if it could run the scripts and given a unique name, which would be important to the data-collecting process.


Another task was the website. Significant progress was made, including the overall website design and a calendar displaying the availability of the robot. In addition, a text box allowing the user to insert their code to control the robot remotely was added. All that is left for the website is to add functionality.


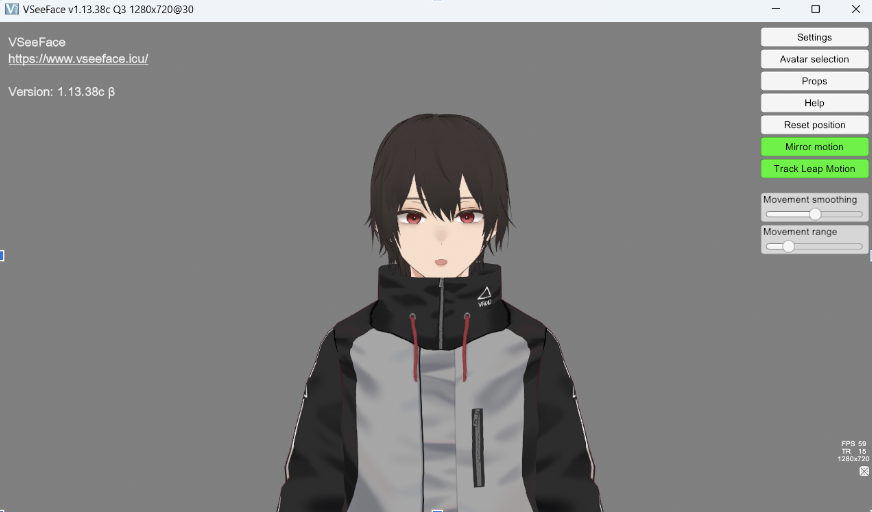

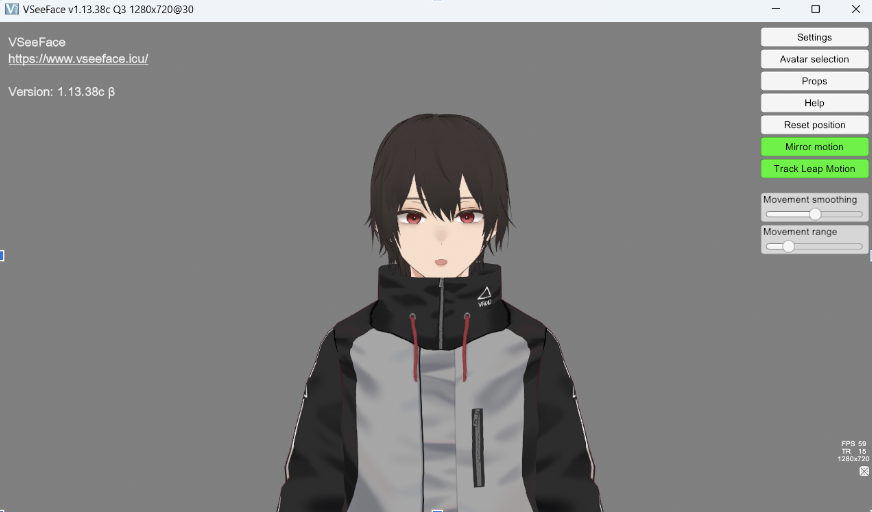

Lastly, the group explored the possibility of including Unity in the project. One of the graduate students presented the idea of using the sensor data to create a "digital twin" of the environment; therefore, some group members did further research. The group was able to create a 3D avatar in Unity which mimics a live webcam feed.


=== WEEK FOUR ===

**[https://docs.google.com/presentation/d/1OhPvm1Z-3h6TYjOG4JKLdBG7Uzx1W5DT9fK2EF5VkTc/edit?usp=sharing Week 4 Presentation]**

For Week Four, the group again spent the time splitting up tasks.

The first task was adding functionality to the website; this included adding the backend/appointment system. With it, the user is able to submit their code using the website. The website then sends an email to the professor, including the user's name, appointment time, and the code they wish to run. It is up to the professor to grant permission.
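The request email described above could be assembled along the following lines with Python's standard `email` library. This is a sketch under stated assumptions: the function name, the addresses, and the subject format are hypothetical, and the source does not say how the site's backend actually builds or sends the message.

```python
from email.message import EmailMessage


def build_request_email(name: str, slot: str, code: str,
                        sender: str, approver: str) -> EmailMessage:
    """Assemble the approval request for one reservation.

    All arguments are supplied by the caller; nothing is sent here.
    """
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = approver
    msg["Subject"] = f"Robot reservation request: {name} at {slot}"
    # Plain-text body with the three items the approver needs to review.
    msg.set_content(
        f"Requester: {name}\n"
        f"Requested time: {slot}\n\n"
        f"Submitted code:\n{code}\n"
    )
    return msg
```

The resulting message could then be handed to `smtplib.SMTP(...).send_message(msg)`; the mail server details are deployment-specific and are left out.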

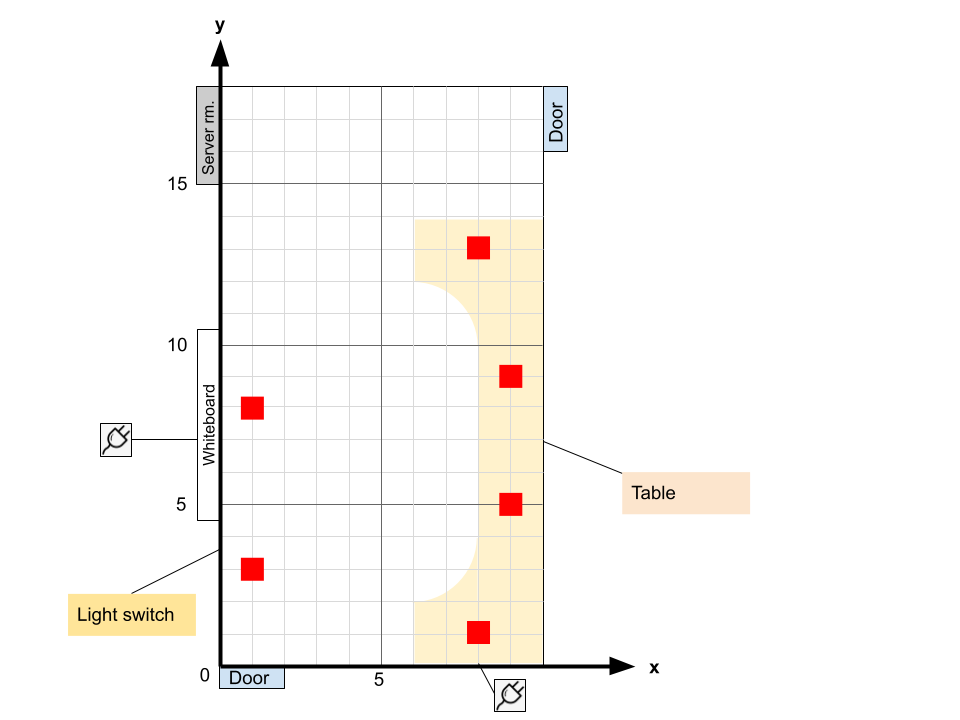

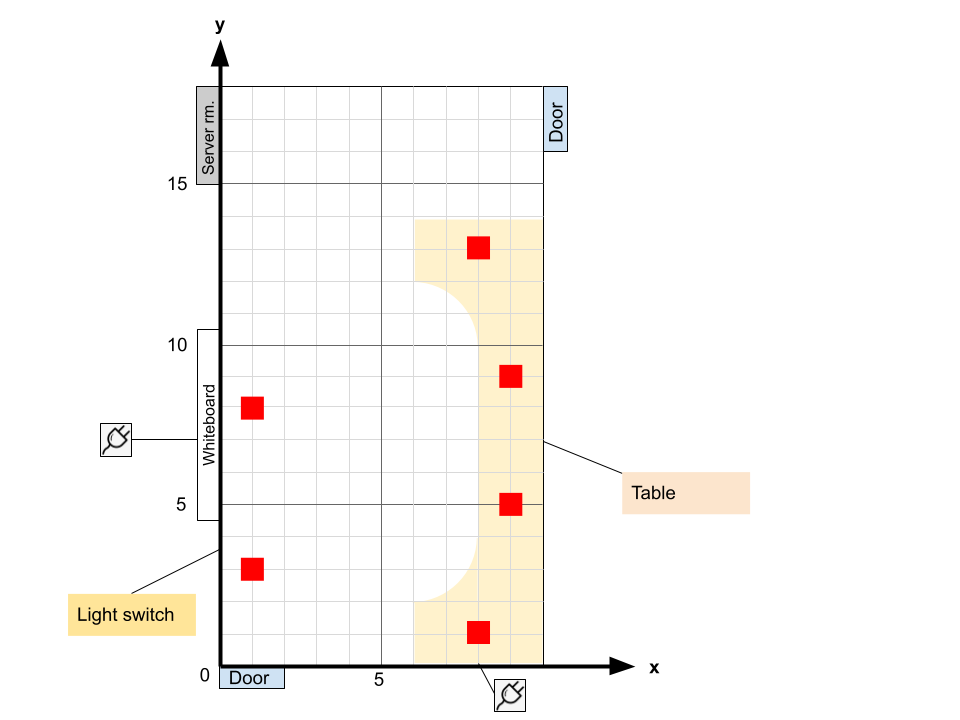

Another task done was measuring the dimensions of WINLAB. A majority of the group spent the time measuring each room in order to create the coordinate system for the MAESTROs.


Lastly, the group continued to explore the integration of Unity by creating a VR environment for possible future use.

=== WEEK FIVE ===

**[https://docs.google.com/presentation/d/1c5EtgELOf6aDRE73iHUt2_Uq74TGIWMUXo0xngeD5ac/edit?usp=sharing Week 5 Presentation]**

Week Five started off with a meeting with the professor about what the group needed the Raspberry Pis to do. To work towards his objectives, the group automated the process of connecting the Pis to Wi-Fi as well as checking the connection by pinging Google. This was done by writing Python scripts and adding them to the rc.local file located on the Pi, which runs the scripts every time the Pi reboots. In addition, the group studied creating a tunnel between the Raspberry Pi and the professor's server, since they are on two different IP addresses; however, it was found that the Pis already contained a script which created that tunnel. Lastly, the foundation of the interactive grid was added to the website. This grid would display real-time information about the MAESTROs, including their name, online status, the last time data was collected, and the continuous streaming data coming in.
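A connectivity check of the kind described above might look like the following sketch. It is not the group's script: the function names are hypothetical, and the `-c` and `-W` flags assume the Linux `ping` shipped with Raspberry Pi OS.

```python
import subprocess
from typing import Callable, List


def ping_command(host: str, count: int = 1, timeout_s: int = 2) -> List[str]:
    """Build a Linux ping invocation: -c is the packet count, -W the reply timeout in seconds."""
    return ["ping", "-c", str(count), "-W", str(timeout_s), host]


def is_connected(host: str = "google.com",
                 run: Callable[..., subprocess.CompletedProcess] = subprocess.run) -> bool:
    """Return True if a ping to `host` exits with status 0.

    `run` is injectable so the check can be exercised without network access.
    """
    result = run(ping_command(host),
                 stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
    return result.returncode == 0
```

A script started from rc.local could call `is_connected()` in a retry loop and only start data collection once it returns `True`.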

=== WEEK SIX ===

**[https://docs.google.com/presentation/d/1ArsCSh_88Yh-_FCZacON2jW-a4Mv5AKRFH3L4P8xQlQ/edit?usp=sharing Week 6 Presentation]**

For Week Six, the group researched the Precision Time Protocol (PTP). This is a protocol used to synchronize clocks throughout a computer network; in the group's case, the clocks of individual Raspberry Pis with either sensors or cameras attached. This is a crucial factor for the project, since the heart of the data collection process relies on connecting the sensor data to the camera input.
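To make the synchronization idea concrete, the sketch below computes the clock offset and path delay from the four timestamps of one PTP delay request-response exchange. The function name is an assumption for this example; the arithmetic is the standard calculation, which assumes the network delay is the same in both directions.

```python
from typing import Tuple


def ptp_offset_and_delay(t1: float, t2: float, t3: float, t4: float) -> Tuple[float, float]:
    """Estimate slave clock offset and one-way delay from one PTP exchange.

    t1: master sends Sync            (master clock)
    t2: slave receives Sync          (slave clock)
    t3: slave sends Delay_Req        (slave clock)
    t4: master receives Delay_Req    (master clock)
    Assumes the path delay is symmetric.
    """
    offset = ((t2 - t1) - (t4 - t3)) / 2.0  # how far the slave is ahead of the master
    delay = ((t2 - t1) + (t4 - t3)) / 2.0   # one-way network delay
    return offset, delay
```

For example, if the slave clock runs 5 time units ahead and the one-way delay is 2, the exchange `t1=100, t2=107, t3=110, t4=107` recovers exactly those two values. The slave then subtracts the offset from its clock.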

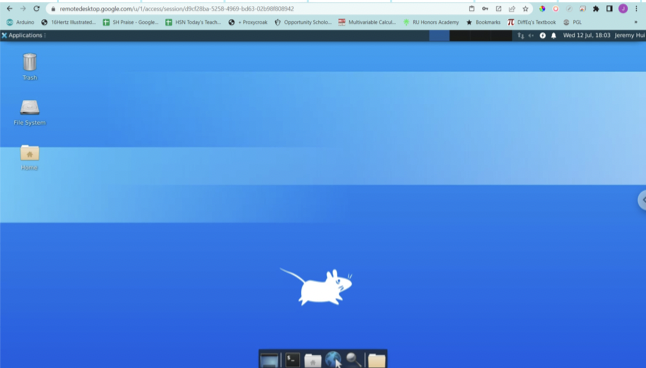

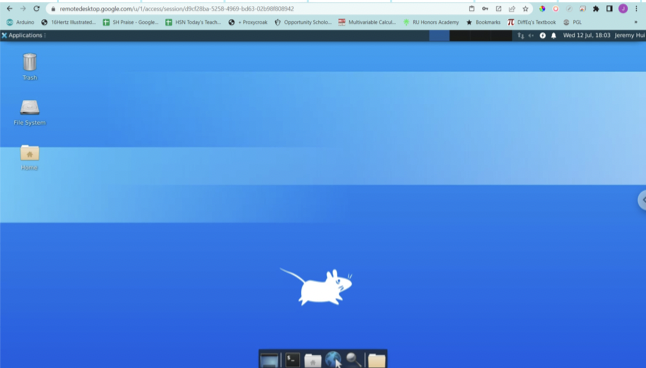

In addition, the group continued to look into Unity by creating an environment where Unity and ROS could connect. The first step was setting up a remote desktop on the orbit node at WINLAB. The end goal is to create a demo which integrates SLAM (Simultaneous Localization And Mapping). SLAM describes a robot attaining a certain degree of environmental awareness through the use of external sensors, and is one of the most studied areas in autonomous mobile robotics.


=== WEEK SEVEN ===

**[https://docs.google.com/presentation/d/1p9R_rovMCXBW7BP6RejAyDSZmvj82NZ5OZdMKCCgQeA/edit?usp=sharing Week 7 Presentation]**

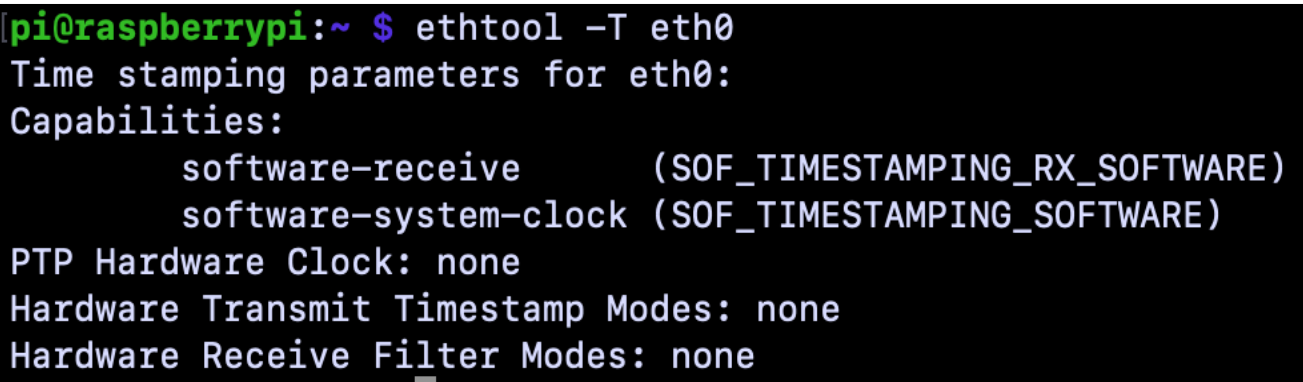

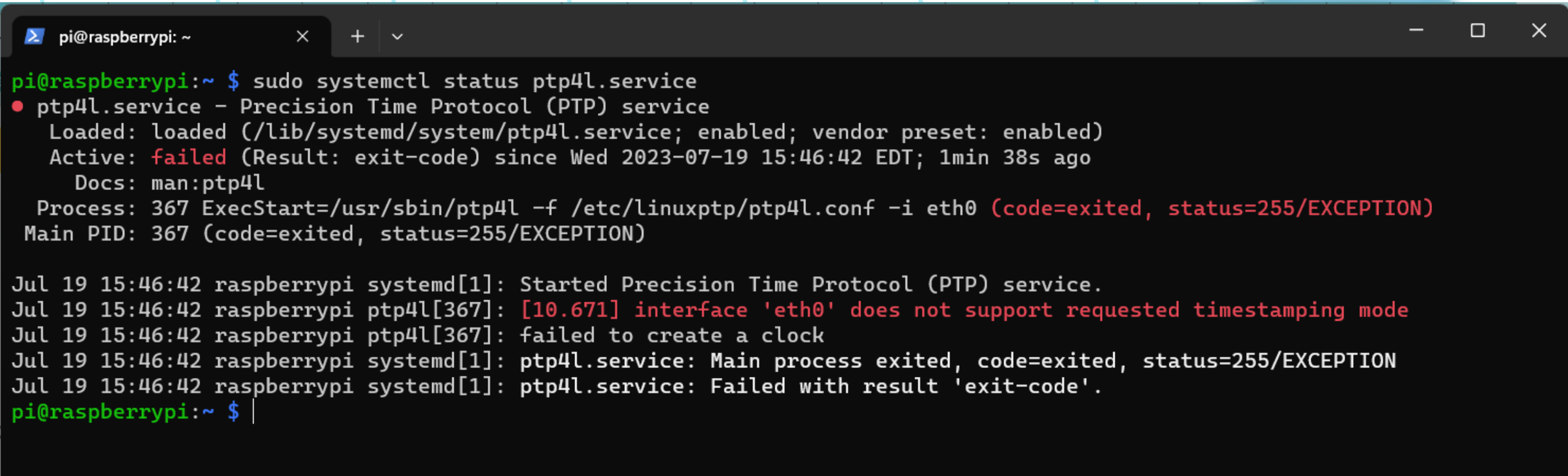

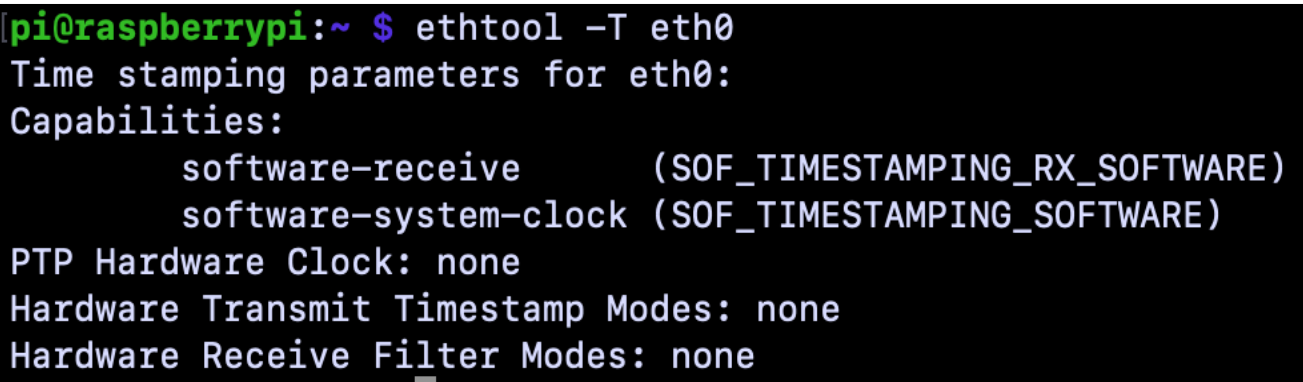

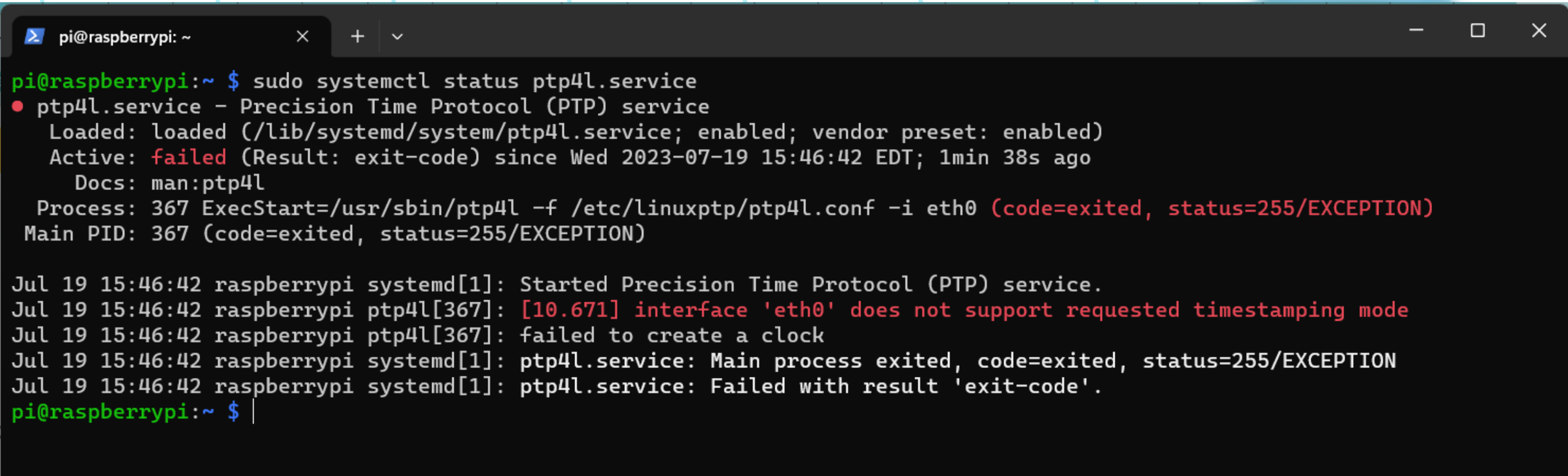

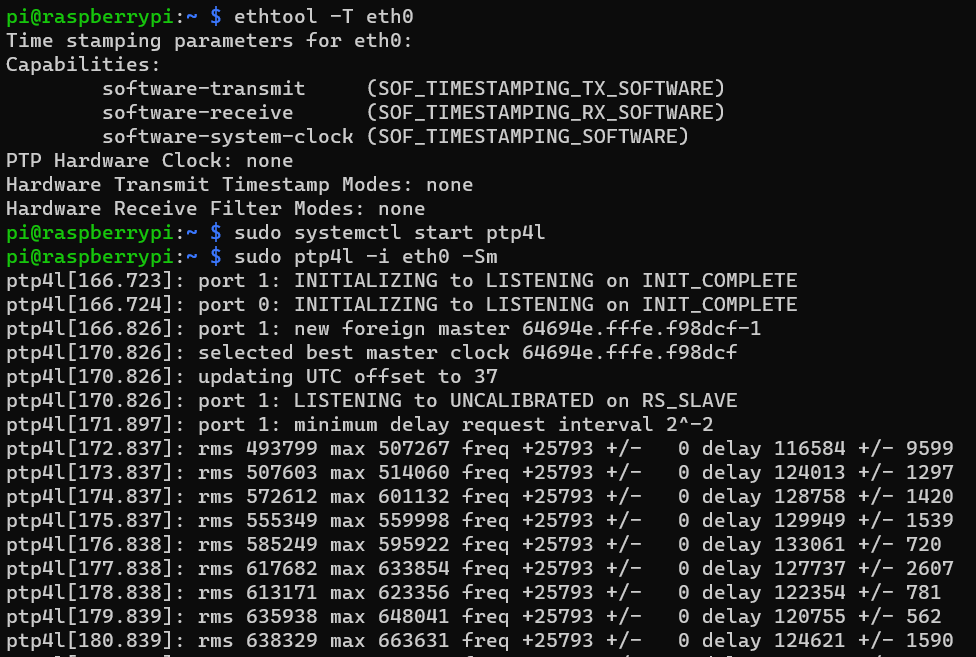

Starting Week Seven, the group attempted to run PTP on a Raspberry Pi. It was found that the Raspberry Pi Model 3B+ does not support IEEE 1588 (the standard that defines PTP) in hardware and has no hardware timestamping feature. This meant PTP had to be achieved through software emulation, by pointing the Raspberry Pi at a boundary server. To start, the group walked through the following !GitHub repository: https://github.com/twteamware/raspberrypi-ptp

After following all the steps on a Pi containing the data-collecting scripts, the PTP software did not work, even after the patches mentioned there were reviewed. This problem was left for further review.

In addition to PTP, the group started to work on using the Raspberry Pi Camera Module, which WINLAB provided. The group was able to successfully capture videos and view them using VLC, a free and open-source media player.


Lastly, the group created the coordinate system for the six Raspberry Pis being placed in the test room. The placement of the Pis depended on the predetermined activities that the group wanted to conduct, such as turning the light on/off and walking in/out of the room. The location of the outlets in the room was also taken into account.


=== WEEK EIGHT ===

**[https://docs.google.com/presentation/d/16K1NKxf1Q3Zdgpvkc-PZixC9skILkxyMGKd98GZ9kKY/edit?usp=sharing Week 8 Presentation]**

For Week Eight, the group continued working on the Raspberry Pi in the hope of getting PTP to run. In addition, as a backup plan, the group looked into buying hardware that would support PTP without software emulation. After some research, the hardware being leaned towards is the Compute Module 4 IO Board together with the Compute Module 4 (CM4). The group's research also indicated that the hardware method can achieve better accuracy than software emulation.


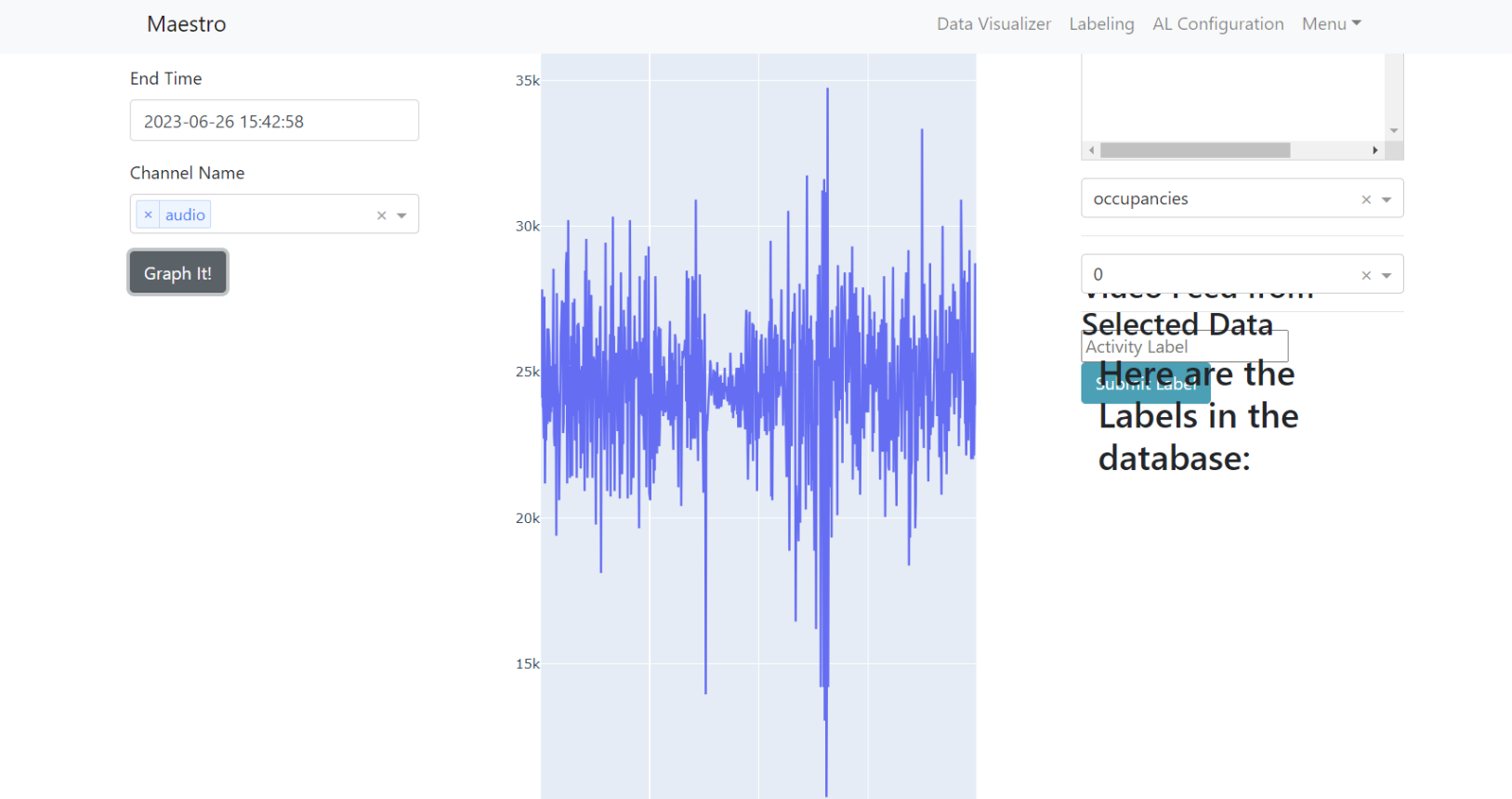

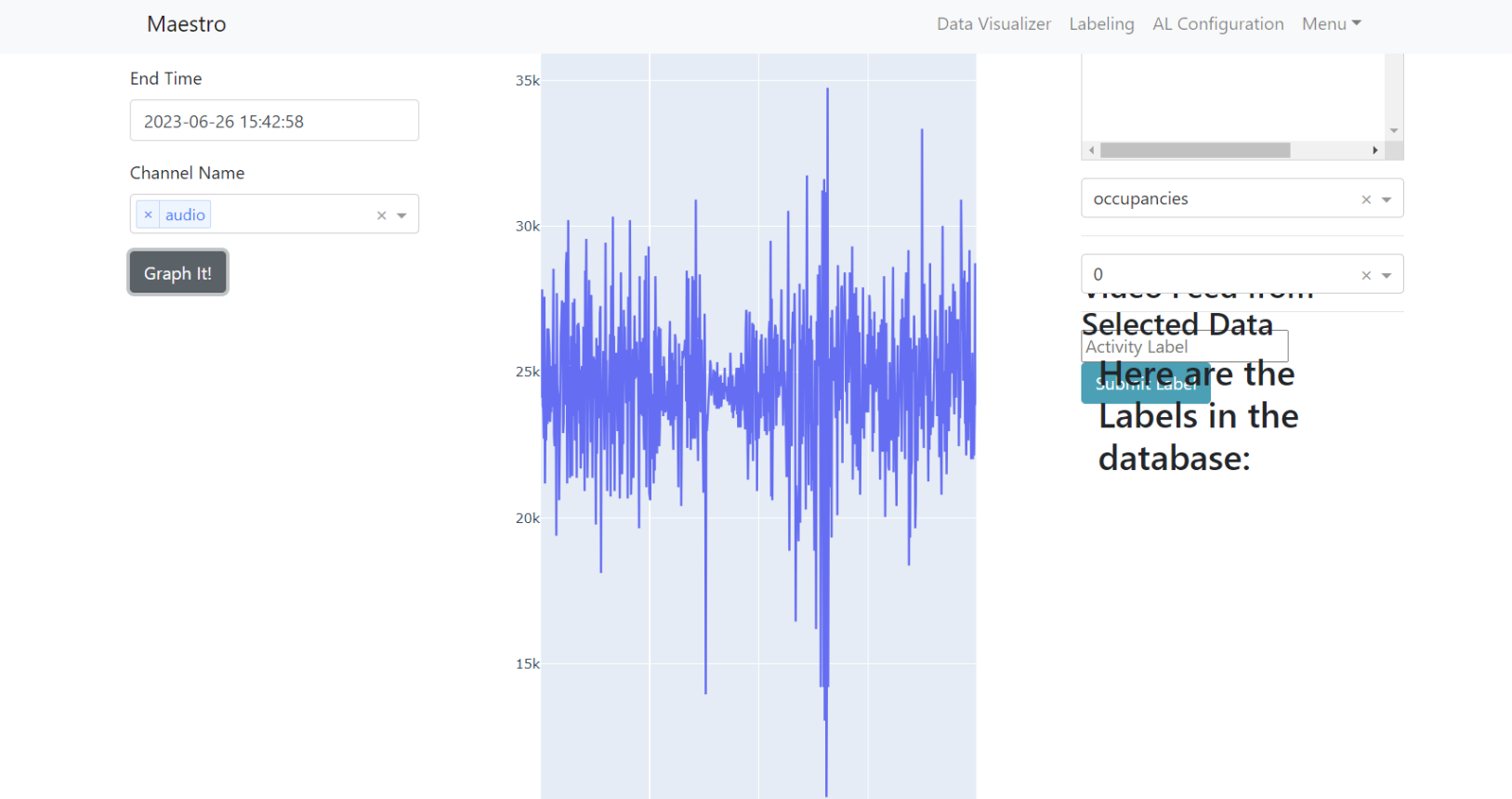

Additionally, the group was able to break down and understand the code written by the previous research members. It was found that their web app used '''Dash by Plotly''' to easily visualize and label the MAESTRO data.


Furthermore, the group continued exploring ROS/Unity through a ROS Point Cloud Visualizer. The point cloud is generated using LIDAR, and the data is imported using the PCX library in Unity. With Unity and a first-person camera, the room can be interacted with and explored. The future goal is for the LIDAR data to be sent in real time using '''RViz''' (the ROS 3D visualization tool).

'''(insert point demo here)'''

=== WEEK NINE ===

**[https://docs.google.com/presentation/d/12ijLg5qRsAsmfym1PZD_P5UunhlGmImwuT9IXOhOK-8/edit?usp=sharing Week 9 Presentation]**

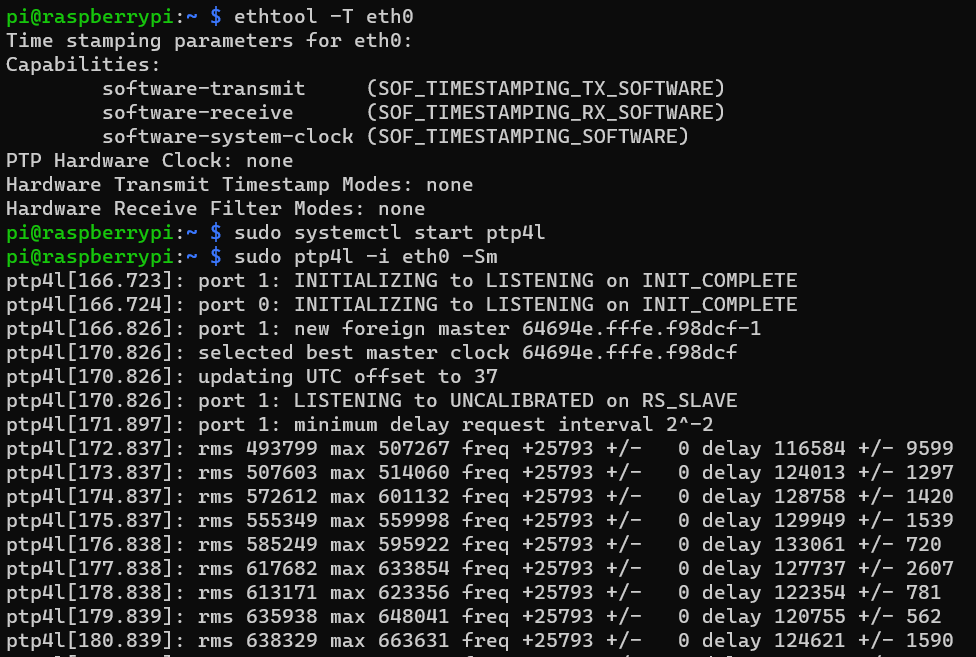

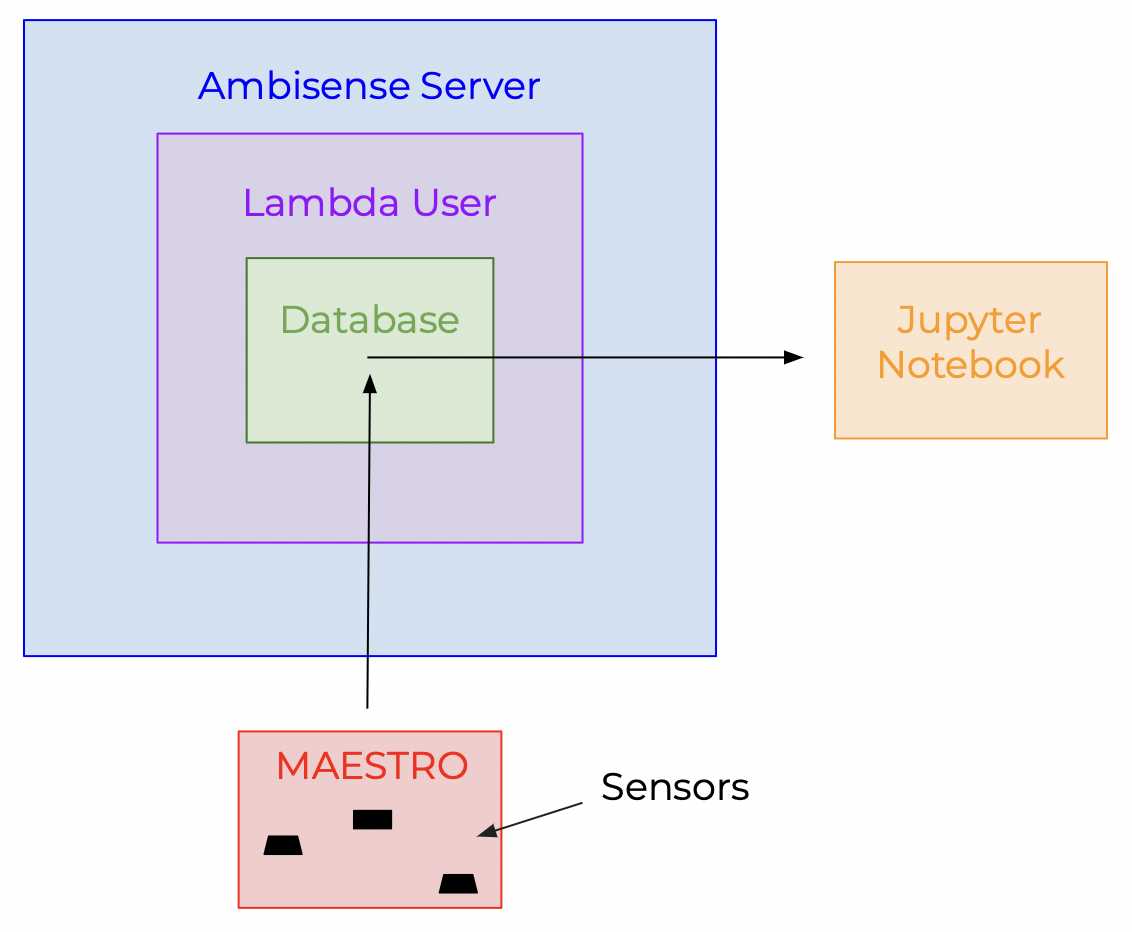

Starting Week Nine, by using a blank Raspberry Pi with just the operating system installed, the group succeeded in getting PTP to work. As seen, the "software-transmit" capability is now added. The group was also able to merge the "smartbox" folder with the PTP Pi; therefore, the MAESTROs are now able to send the sensor data to the database while having the capability of PTP.


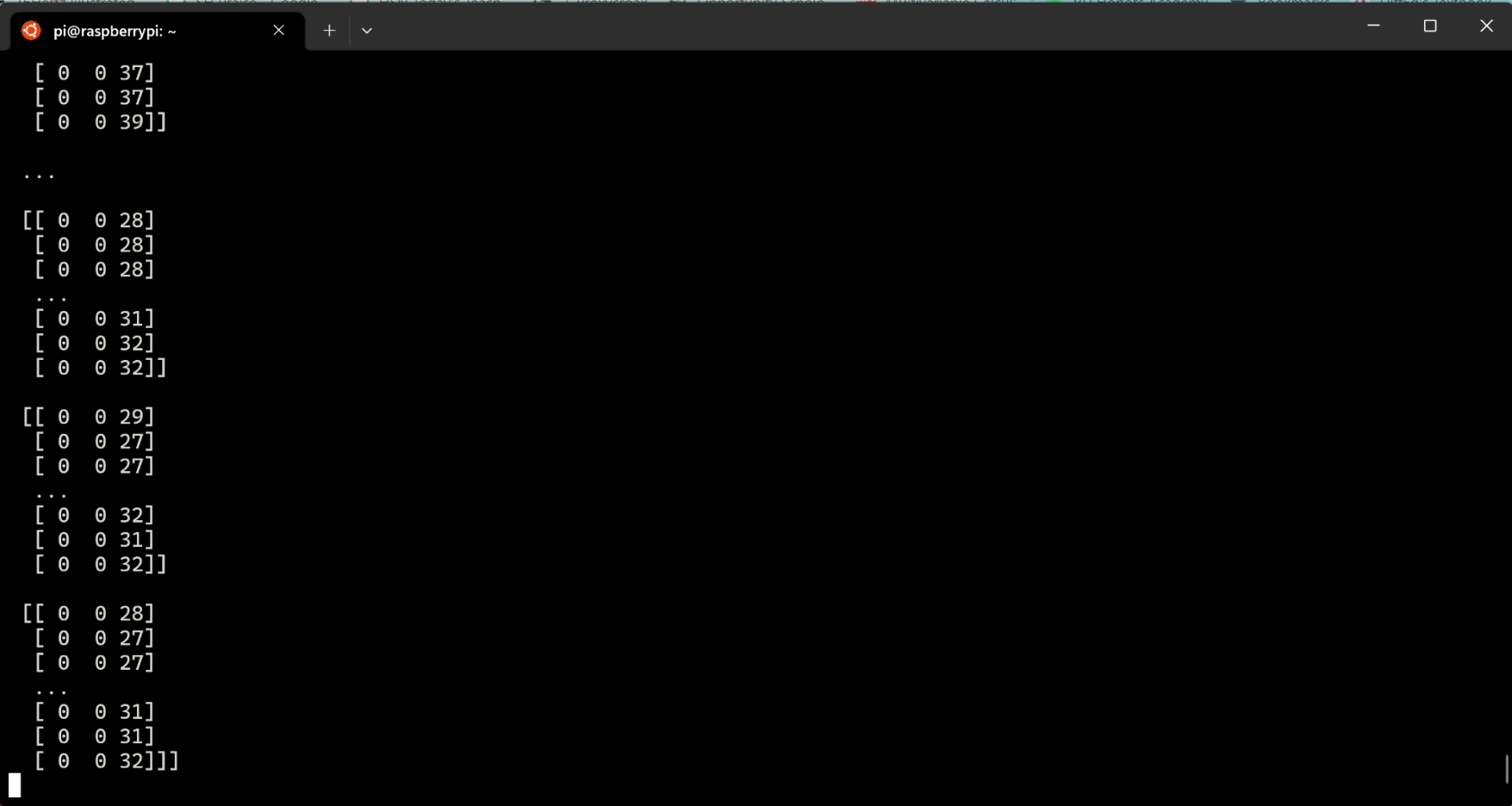

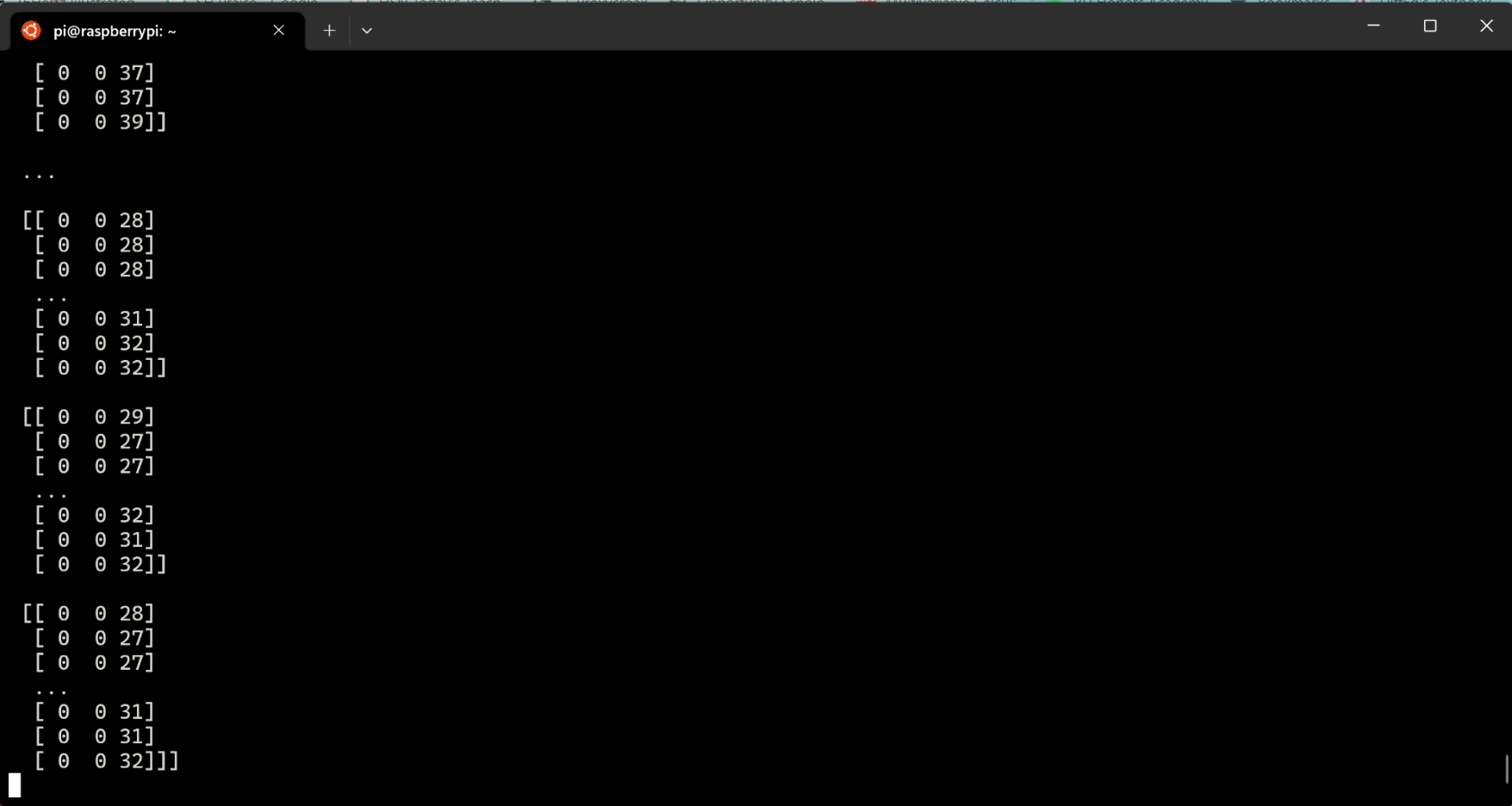

Moreover, the group was able to get the camera data sent to the database in real time. This data can then be analyzed in a Jupyter Notebook.
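With both sensor and camera data timestamped in the database, one analysis step a notebook might perform is pairing each sensor reading with the closest camera frame. The sketch below is an assumption about how that could be done, not the group's code; the function name and the 50 ms tolerance are hypothetical.

```python
from bisect import bisect_left
from typing import List, Optional


def nearest_frame(frame_times: List[float], reading_time: float,
                  max_gap: float = 0.05) -> Optional[int]:
    """Return the index of the frame closest in time to a sensor reading.

    `frame_times` must be sorted ascending (seconds). Returns None when no
    frame lies within `max_gap` seconds (hypothetical tolerance).
    """
    if not frame_times:
        return None
    i = bisect_left(frame_times, reading_time)
    # Only the neighbours on either side of the insertion point can be closest.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(frame_times)]
    best = min(candidates, key=lambda j: abs(frame_times[j] - reading_time))
    if abs(frame_times[best] - reading_time) > max_gap:
        return None
    return best
```

The binary search keeps each lookup logarithmic in the number of frames, which matters for continuous video.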


Finally, the group cloned the "perfect" SD card for the 6 Raspberry Pis being used in the experiment.

=== WEEK TEN ===

**[https://docs.google.com/presentation/d/1cuRq7wwAi_VLquOAnOBlh81yTnIRZ29ZyBd3wXOo2Cc/edit?usp=sharing Week 10 Presentation]**

The group looked for Ethernet switches with PTP support that fit within the budget.

=== Summary ===

After ten weeks, the group succeeded in creating a functional website, designing a coordinate system, automating Wi-Fi processes, and installing PTP on the Raspberry Pis, and also explored the possible use of Unity/ROS.

=== Future Work ===

For the future, the group is looking to modify certain aspects of the project as well as conduct experiments for the large language model.

First, the group is planning to purchase hardware for PTP. The current focus is the TimeCard mini Platinum Edition from OCP-TAP. Although the group got software emulation to work, the hope is to achieve higher accuracy using this hardware setup. After choosing which method is better, the group plans on setting up the MAESTROs and cameras in the coordinate system. Hopefully, data collection can start in the near future.

''' insert TimeCard mini '''

After gathering enough sensor and camera data, the training of the neural network can begin. The goal is to label sensor activity using natural language descriptions of video data. Ultimately, the group hopes this project will bridge the gap between sensor data and text.
