
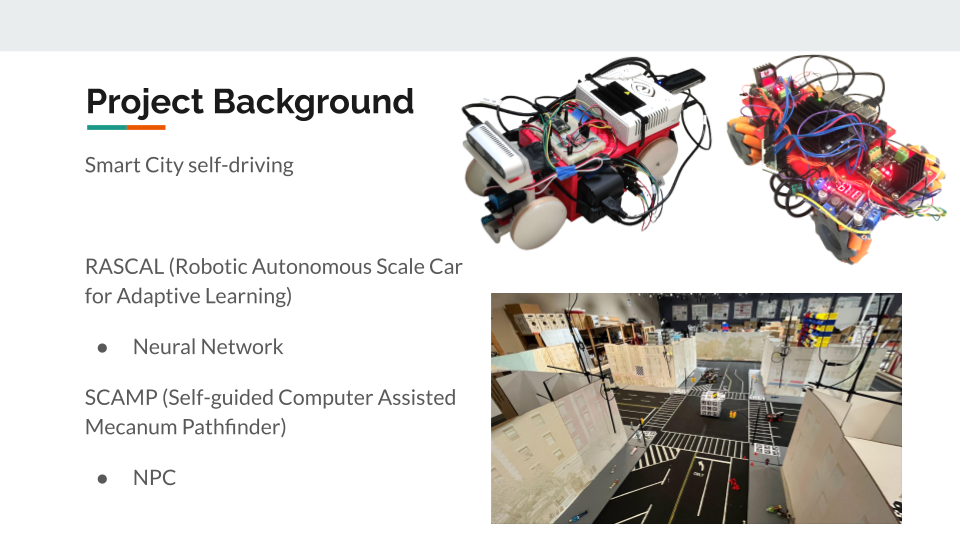

Self-Driving Vehicular Project

Team: Aaron Cruz [UG], Arya Shetty [UG], Brandon Cheng [UG], Tommy Chu [UG], Erik Nießen [HS], Siddarth Malhotra [HS]

Advisors: Ivan Seskar and Jennifer Shane

Project Description & Goals:

Build and train miniature autonomous cars to drive in a miniature city.

RASCAL (Robotic Autonomous Scale Car for Adaptive Learning):

- Using the car sensors, offload image and control data onto a cloud server.

- This server will train a neural network for our vehicle to use in order to drive autonomously given camera image data.

Technologies: ROS (Robot Operating System), PyTorch

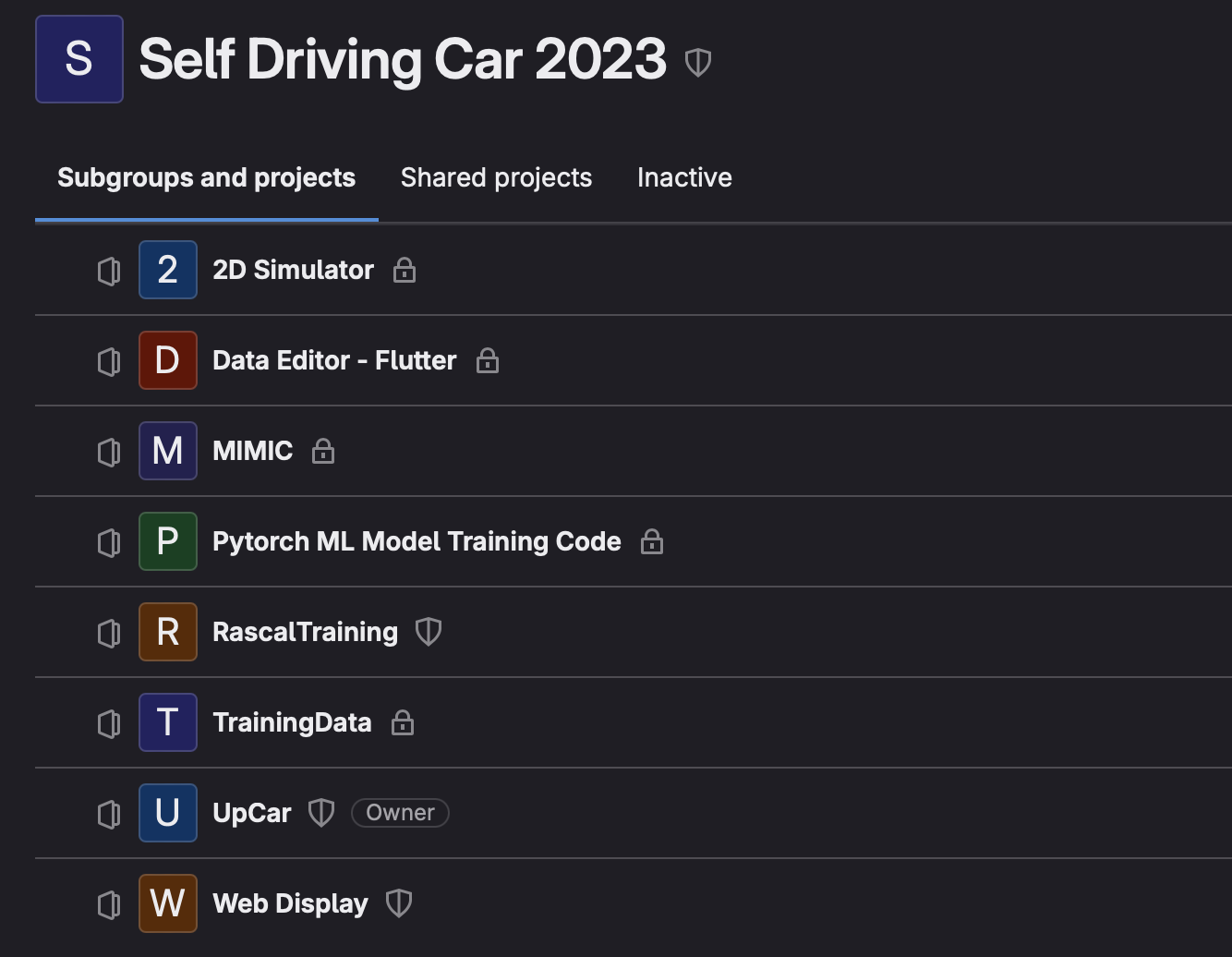

https://gitlab.orbit-lab.org/self-driving-car-2023/

Week 1: 5/28 - 5/30

Progress:

- Familiarized ourselves with last summer's work: GitLab, RASCAL setup, poster

- Debugged issue with RASCAL's pure pursuit

Week 2: 6/3 - 6/6

Progress:

- Set up X11 forwarding for GUI applications through SSH

- Visual odometry using the RealSense camera and RTAB-Map

- Streamlined data pipeline that processes bag data (car camera + control data) into .mp4 video

- Detected ArUco markers in a given image using Python & OpenCV libraries

- Set up Intersection server (node with GPU)

- Developed PyTorch MNIST model

- Trained "yellow thing" neural network

- Lined up a perspective drawing with the camera to determine FOV
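
The FOV measurement in the last item can be illustrated with basic pinhole geometry. This is a minimal sketch, not the team's actual procedure: it assumes a flat target of known width that exactly fills the image horizontally at a measured distance, and the function name `horizontal_fov_deg` is hypothetical.

```python
import math


def horizontal_fov_deg(visible_width: float, distance: float) -> float:
    """Horizontal field of view (degrees) of an ideal pinhole camera.

    visible_width: width of the scene that exactly fills the image, measured
                   on a plane perpendicular to the optical axis (assumed setup).
    distance:      distance from the camera to that plane, in the same units.
    """
    if visible_width <= 0 or distance <= 0:
        raise ValueError("visible_width and distance must be positive")
    # Half of the visible width and the distance form a right triangle
    # whose angle at the camera is half of the field of view.
    return math.degrees(2.0 * math.atan(visible_width / (2.0 * distance)))


if __name__ == "__main__":
    # A 2 m wide target that fills the frame from 1 m away.
    print(horizontal_fov_deg(2.0, 1.0))
```

Lens distortion is ignored here, so the result is only a first approximation for a real camera.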

Week 3: 6/10 - 6/13

Progress:

- Created a web display "assassin" that shuts down the web server when ROS closes

- Tested the "yellow thing" model with great results

- SSHFS setup

- Calibrated RealSense camera

- Created "snap picture" button on web display for convenience

- Developed Python script to detect ArUco markers and estimate camera position

- Tested point cloud mapping with RTAB-Map

- Attempted sensor fusion with encoder odometry and visual odometry

- Data augmentation to artificially generate new camera perspectives from existing images
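
The page does not say how the encoder and visual odometry were combined. As one possible illustration of the idea, the sketch below fuses two independent estimates of the same quantity by inverse-variance weighting (the scalar form of a Kalman update). The function name and the variance values are assumptions made for the example.

```python
def fuse_estimates(x_enc: float, var_enc: float,
                   x_vis: float, var_vis: float) -> tuple[float, float]:
    """Fuse two independent estimates of one quantity (e.g. x position).

    x_enc, var_enc: estimate and variance from encoder odometry (assumed known)
    x_vis, var_vis: estimate and variance from visual odometry (assumed known)
    Returns the fused estimate and its variance.
    """
    if var_enc <= 0 or var_vis <= 0:
        raise ValueError("variances must be positive")
    # Each source is weighted by the other's variance, so the more
    # certain source contributes more to the result.
    w_enc = var_vis / (var_enc + var_vis)
    fused = w_enc * x_enc + (1.0 - w_enc) * x_vis
    fused_var = (var_enc * var_vis) / (var_enc + var_vis)
    return fused, fused_var


if __name__ == "__main__":
    # Encoders are trusted more here (smaller variance), purely for illustration.
    print(fuse_estimates(0.0, 1.0, 10.0, 9.0))
```

The fused variance is always smaller than either input variance, which is the main reason to combine the two sources.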

Week 4: 6/17 - 6/20

Progress:

- Refined ArUco marker detection for more accurate car pose estimation

- Trained model with video instead of images

- Improved data pipeline from car sensors to server

- Refined data augmentation to simulate new camera perspectives

- Added more data visualization (Replayer) to display steering curve, path, and images to web server

Week 5: 6/24 - 6/27

Progress:

- ArUco marker detection now updates car position within the XY plane. Finished self-calibration system

- Addressed normalization and cropping problems

- Introduced Grad-CAM heat map

- Resolved Python version mismatch issue

- Visualized training data bias through histogram

- Smoothed data to reduce inconsistency in training data

- Simulation Camera - skews closest image to simulate new view

- Added web display improvements - search commands, controller keybinds
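
The histogram and smoothing items above can be sketched in a few lines. This is an illustrative example only: it assumes steering commands normalized to the range [-1, 1], and the helper names, bin count, and window size are hypothetical choices, not the team's settings.

```python
def steering_histogram(values, n_bins=5, lo=-1.0, hi=1.0):
    """Count steering values per equal-width bin over [lo, hi]."""
    counts = [0] * n_bins
    width = (hi - lo) / n_bins
    for v in values:
        if lo <= v <= hi:
            # Clamp so that v == hi falls into the last bin.
            idx = min(int((v - lo) / width), n_bins - 1)
            counts[idx] += 1
    return counts


def moving_average(values, window=3):
    """Centered moving average; the window shrinks at the edges.

    `window` is expected to be odd so the average stays centered.
    """
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - half): i + half + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


if __name__ == "__main__":
    steering = [0.0, 0.0, 0.05, -0.9, 0.9]
    print(steering_histogram(steering))   # a tall middle bin indicates bias toward 0
    print(moving_average([0.0, 3.0, 0.0]))
```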

Week 6: 7/1 - 7/3

Progress:

- Curvature interpolation and calibration

- Trained model using double orange barriers

- Training batches are less zero-biased

- Data augmentation: blur artifacts during NN normalization, addressed with a solid fill

- Simulated driving with ML model and image skewing

- Improvements to ArUco detection: code refactoring, functionality for multiple ArUco markers
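
One common way to make training batches less zero-biased is to drop a fraction of the near-zero steering samples before batching. The page does not describe the method actually used, so the sketch below is an assumption; the threshold, keep probability, and `(image_id, steering)` sample layout are all hypothetical.

```python
import random


def downsample_near_zero(samples, threshold=0.05, keep_prob=0.3, seed=0):
    """Keep every sample with |steering| >= threshold and only a random
    fraction (keep_prob) of the near-zero ones.

    samples: iterable of (image_id, steering) pairs (assumed layout).
    """
    rng = random.Random(seed)  # seeded so the selection is reproducible
    kept = []
    for image_id, steering in samples:
        if abs(steering) >= threshold or rng.random() < keep_prob:
            kept.append((image_id, steering))
    return kept


if __name__ == "__main__":
    data = [("a", 0.0), ("b", 0.5), ("c", -0.4), ("d", 0.01)]
    print(downsample_near_zero(data, keep_prob=0.0))
```

Dropping samples trades dataset size for balance; weighting the loss per sample is an alternative that keeps all the data.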

Week 7: 7/8 - 7/11

Progress:

- RASCAL simulator on server

- Updated CAD models for RASCAL

- YOLO (You Only Look Once) object detection and distance estimation

- City training data using a constrained path

- Automated testing and evaluation for models

- ArUco detection application: integrated into city, testing with pursuit loop
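
For the YOLO distance item, a simple way to get range from a detection is the pinhole relation between an object's real height and its bounding-box height in pixels. This is a sketch under stated assumptions, not necessarily the method used on the car (a depth camera could supply distance directly); the focal length and object height below are made-up illustration values.

```python
def distance_from_bbox(focal_length_px: float,
                       real_height_m: float,
                       bbox_height_px: float) -> float:
    """Estimate distance (meters) to an object of known height.

    Pinhole model: bbox_height_px / focal_length_px = real_height_m / distance.
    focal_length_px: focal length in pixels, from camera calibration (assumed).
    real_height_m:   true height of the detected object (assumed known).
    bbox_height_px:  height of the detector's bounding box in pixels.
    """
    if bbox_height_px <= 0:
        raise ValueError("bbox_height_px must be positive")
    return focal_length_px * real_height_m / bbox_height_px


if __name__ == "__main__":
    # A 0.3 m tall cone that appears 90 px tall with a 600 px focal length.
    print(distance_from_bbox(600.0, 0.3, 90.0))
```

The estimate degrades when the box is clipped by the image border or the object is partly occluded, since the box height no longer matches the full object.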

Week 8: 7/15 - 7/18

Progress:

- Spline processing & model, calculates future trajectory based on best fit spline

- Setting up Virtual Machine & documentation

- YOLO - Cone & barrier detection

- Hyperparameter optimization for model

- Automation, testing, and adjustments for ArUco detection
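
To illustrate the idea behind fitting a curve to past positions and extrapolating the trajectory, the sketch below fits a single quadratic by least squares. Note the substitution: this is a plain polynomial fit, not the spline the team used, and the coordinate convention (x forward, y lateral) and function names are assumptions for the example.

```python
def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def fit_quadratic(xs, ys):
    """Least-squares fit of y = a + b*x + c*x^2; returns (a, b, c)."""
    # Power sums for the normal equations.
    s = [sum(x ** k for x in xs) for k in range(5)]
    t = [sum((x ** k) * y for x, y in zip(xs, ys)) for k in range(3)]
    A = [[s[i + j] for j in range(3)] for i in range(3)]
    d = _det3(A)
    if abs(d) < 1e-12:
        raise ValueError("need at least three distinct x values")
    coeffs = []
    for col in range(3):
        # Cramer's rule: replace one column with the right-hand side.
        Ac = [row[:] for row in A]
        for r in range(3):
            Ac[r][col] = t[r]
        coeffs.append(_det3(Ac) / d)
    return tuple(coeffs)


def predict(coeffs, x):
    """Evaluate the fitted quadratic at x."""
    a, b, c = coeffs
    return a + b * x + c * x * x


if __name__ == "__main__":
    xs = [0.0, 1.0, 2.0, 3.0]
    ys = [1.0, 3.5, 7.0, 11.5]   # generated from y = 1 + 2x + 0.5x^2
    print(predict(fit_quadratic(xs, ys), 4.0))
```

A spline would follow tighter turns better than one quadratic, at the cost of more parameters and less stable extrapolation beyond the last point.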

Week 9: 7/22 - 7/25

Progress:

- Data loader improvements: separate replaying and data loader, delete data, load multiple sessions at once

- More city training data - lane correction, crash avoidance, orange blocks to limit path

- Efficient automation for city training

- YOLO: traffic light detection, measuring speed vs. accuracy

- Continuing hyperparameter optimization

Week 10: 7/29 - 8/1

Progress:

- Quality of life changes for data collection: camera stream refresh, bag size output

- New hardware for new car: 3D printed parts, fisheye camera, wireless controller

- Fisheye camera: calibration and image undistortion

- YOLO: custom dataset training, red light finder

- Hyperparameter optimization improvements

- Training city data with the new fisheye camera!

Final Week: 8/5 - 8/7

Attachments (5)

- Detected.png (361.9 KB ) - added by 2 years ago.

- gitlab.png (134.3 KB ) - added by 2 years ago.

- SDC Week 2.png (406.3 KB ) - added by 2 years ago.

- SDC 2024 WINLAB Poster.png (2.1 MB ) - added by 22 months ago. Poster

- SDC Open House 2024 .png (405.3 KB ) - added by 22 months ago.